Posts Tagged ‘philosophy of science’

“Brains exist because the distribution of resources necessary for survival and the hazards that threaten survival vary in space and time”*…

And, it seems, they not only evolve, but in ways and with a frequency we’ve only just begun to appreciate. It’s long been noted that evolution seems to have a thing for “carcinization”– crabs have evolved separately at least five times. (Oh, and apparently also for anteaters…) Recent findings hint that evolution might have the same sort of jones for the brain. Amy Maxmen reports…

Our brains, perched atop a network of nerve cells that ascend the length of our bodies, are thought to have arisen once in an animal hundreds of millions of years ago and then evolved over time. However, new findings suggest instead that brains and nervous systems originated multiple times from scratch.

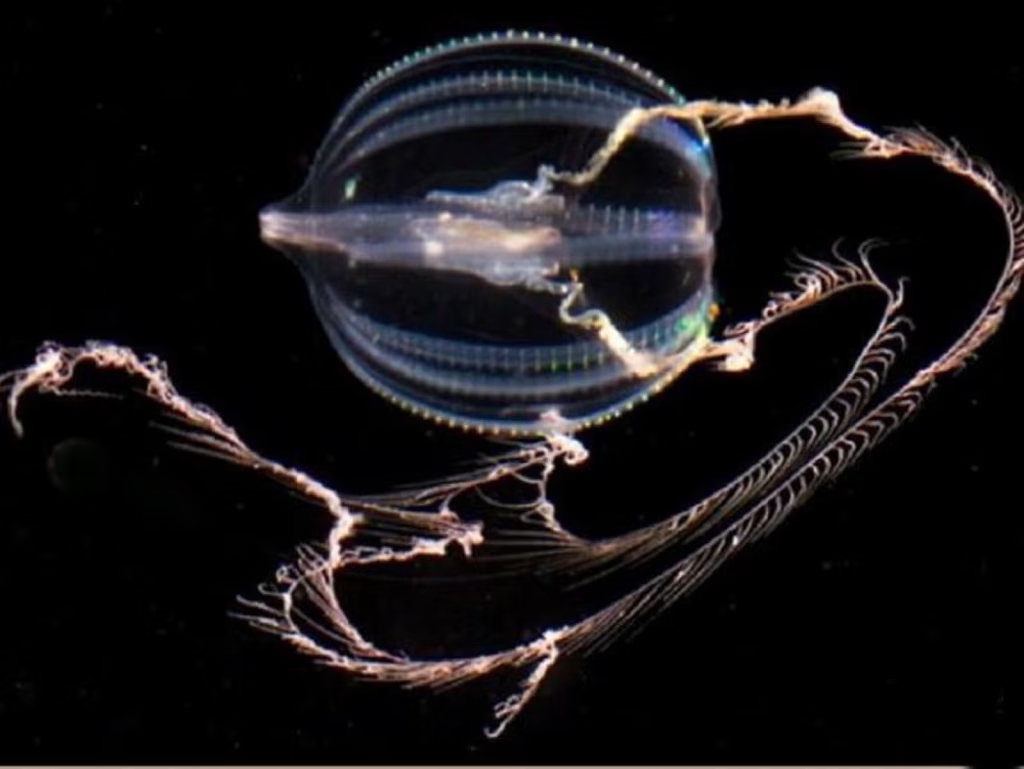

The findings, published today in Nature, highlight an ancient and gelatinous marine predator called a comb jelly [pictured at top]. Unlike pulsating jellyfish, comb jellies swim by “rowing” their many hair-like cilia, which are arranged in rows called combs. They possess rudimentary brains and sophisticated nervous systems replete with elongated cells that communicate through synapses much like our own. Some comb jellies show mirror-like bilateral symmetry, as do we. And like most animals, their muscles derive from a middle tissue layer, which does not exist in jellyfish or sponges, another ancient type of aquatic creature.

So it’s little wonder that biologists have long placed the comb jelly group close to worms, flies, and humans on the evolutionary tree of life; sponges emerge at the base, meaning that this group appeared first. In this traditional view, complex body parts like the brain and muscles arose gradually, and only once, since those parts look similar across related animals, and the chances of that same evolutionary process being repeated seem slim.

But this scenario was shaken by a report in Science last year, which suggested that the comb jelly group emerged before jellyfish and even the brainless, muscle-less sponges, more than 550 million years ago.

Some biologists doubted the rearrangement because it implied two equally uncomfortable possibilities: that the ancestor of all living animals had true muscles and a rudimentary brain, and then sponges and jellyfish lost those parts without a trace; or that the great animal ancestor was simple, and comb jellies evolved separately from all the other animals, yet ended up with rather similar nervous systems, muscles, and bilateral symmetry. When paleontologist Graham Budd heard the news last year, he said, “It is effectively saying animals evolved twice. Frankly, I’m not ready to believe it.”

Without a time machine, it’s impossible to know what our great ancestor looked like. However, today’s report adds more support to the notion that she was simple and comb jellies independently evolved their complex body parts. Leonid Moroz, a neurobiologist at the University of Florida’s Whitney Laboratory for Marine Bioscience, and his colleagues confirm comb jellies’ position below sponges at the base of the evolutionary tree with an analysis of genetic sequences from 11 comb jelly species…

… In an essay for Nautilus called “Evolution, You’re Drunk,” I described how hypotheses entrenched in the notion that evolution leads toward increasing complexity have recently begun to teeter. Now Moroz’s study adds another shove. It seconds the finding that simple sponges, long placed at the base of the evolutionary tree, actually evolved after the sophisticated comb jelly group arose. The story of how complexity evolves is more complex than scientists realized.

Furthermore, the brain—the epitome of complexity—seems to have sprouted up at least twice over evolutionary time. This clashes with the traditional notion that complex, multifaceted features come about in a very specific way, and each emerges just one time. “What everyone has said about complexity is wrong,” Moroz says. “It can happen more than once.”

Finding that comb jellies independently arrived at similar ends as other animals might also have surprised the late paleontologist Stephen Jay Gould, who famously doubted that animals would look the same today if the world were to begin again—if we could replay “the tape of life.”

Is such convergence in design a coincidence? Probably not, guesses Andreas Hejnol, an evolutionary developmental biologist at the Sars International Centre for Marine Molecular Biology in Norway. “If you need a fast communication system, it helps to have extended cells that communicate through chemicals,” he says. In other words, the structure of the nervous system reflects its function. So if intelligent life exists elsewhere in the universe, it’s not too far a stretch to think it could possess a brain comprised of trillions of neurons. Hejnol asks, “How else could it be?”…

The mysterious mechanism of evolution: “Evolution May Be Drunk, But It’s Serious About Making Brains,” from @amymaxmen.bsky.social in @nautil.us.

* John M. Allman, Evolving Brains

###

As we contemplate the changing comprehension of cerebra, we might send thoughtful birthday greetings to Sir Karl Raimund Popper; he was born on this date in 1902. One of the greatest philosophers of science of the 20th century, Popper is best known for his rejection of the classical inductivist views on the scientific method, in favor of empirical falsification: a theory in the empirical sciences can never be proven, but it can be falsified, meaning that it can and should be scrutinized by decisive experiments. (Or more simply put, whereas classical inductive approaches sought to prove hypotheses true by piling up confirming evidence, Popper reversed the logic: a hypothesis can never be proven true, and is accepted only provisionally, until it is proven false.)

Popper was also a powerful critic of historicism in political thought, and (in books like The Open Society and Its Enemies and The Poverty of Historicism) an enemy of authoritarianism and totalitarianism (in which role he was a mentor to George Soros).

“The advance of genetic engineering makes it quite conceivable that we will begin to design our own evolutionary progress”*…

The obligations of a multi-day meeting (and the travel involved) mean that, from this issue, (R)D will be on pause until February 12 or 13 (depending on how connections play out…)

… and indeed the evolutionary progress of other species. But, Deputy Co-chair of the Nuffield Council on Bioethics Melanie Challenger asks, have we been sufficiently thoughtful about the implications of this power?…

In 2016, Klaus Schwab announced that we had entered the Fourth Industrial Revolution. This is the era of the industrialization of biology, the leveraging of technologies to modify biological materials to meet human goals. While the first two Industrial Revolutions exploited energy and materials and the Third exploited digital information, the current revolution is a direct manipulation of life-forms and life’s substances.

The signature invention of this new era is CRISPR, dubbed “genetic scissors.” CRISPR is a ground-breaking method of making precise changes to DNA for a wide range of possible uses, from disease reduction and elimination to the eradication of “pest” species and increases in the productivity of farmed animals. CRISPR systems (the best-known being CRISPR-Cas9) originate in RNA-based bacterial defense systems. In nature, bacteria store snippets of the genomes of bacteriophages (viruses that attack bacteria) as a record of past infections; guided by RNA copies of that record, the Cas9 enzyme recognizes and cuts the DNA of the same viruses when they attack again. Scientists realized that this immunological strategy could be co-opted to create a general tool for cutting DNA.

The optimism among those that seek to utilize these tools has been palpable for some time. As noted by the researchers at The Roslin Institute, creators of Dolly the Sheep, the first mammal cloned from an adult cell: “Until recently, we have only been able to dream of…the ability to induce precise insertions or deletions easily and efficiently in the germline of livestock. With the advent of genome editors this is now possible.”

But the technologies of this new industrial era present ethical dilemmas and unknown consequences. What will it take to ensure that this revolution avoids worsening the enormous challenges we already face, especially from biodiversity loss and climate change? How can we get the balance right between the benefits and risks of human inventiveness?

In the 1980s, tech theorist David Collingridge presented his eponymous dilemma for those seeking to control potentially disruptive technologies. First, there is an “information problem” in which significant impacts are often invisible until the technology is already in use. Second, there is a “power problem” in which the technology becomes difficult to shape, regulate or scale back once it has become integrated in our lives. If we are going to navigate the Fourth Industrial Revolution successfully, we need to examine our use of CRISPR through the Collingridge dilemma.

The investors and engineers of the first industrial revolutions in the nineteenth century provide a vivid example of the information problem. They hoped that innovations like the combustion engine would unlock efficiency across multiple human sectors, from transportation to logistics to tourism. Such optimism was not unwarranted. Yet, as Collingridge’s dilemma suggests, it is easier to picture gains than to predict trouble. Building road systems and infrastructure carved capital movements into the landscape, symbolising freedom and the flow of wealth and creativity. Yet the striking visual parallels with our circulatory system did not stimulate anyone to forecast the ninety per cent of people today who are exposed to unsafe pollution levels from traffic or the associated health burdens from heart and lung disease to asthma. Nobody then foresaw the yearly deaths of two billion or so non-human vertebrates on our roads today, or that high traffic areas would cause localised declines in insect abundance of at least a quarter and, in some studies, as much as eighty per cent.

And, of course, most calamitous of all, there is climate change. Traffic emissions account for a fifth of all contributions to global warming. Yet the idea that a profitable and efficient machine like the combustion engine might lead to devastating shifts in temperature and weather patterns was scarcely conceivable at the time. Now, it is a near ubiquitous feature of our understanding of the world.

When it comes to the engineering of biology, a similar information problem abounds. Not only is our understanding of biological life incomplete, but we know little about what the industrial processes that we are advancing inside the cells of organisms will do. The changes are both physically and ethically occluded. The ramifications of this and other related biotechnologies are not only rendered uncertain by the inherently complex nature of biological systems but are largely inaccessible to our imaginations.

We must struggle with the radical character of the industrialization of biology. Gene drives (a tool to increase the likelihood of passing on a gene) can weaponize the bodies and reproductive strategies of organisms to bias evolution in a directed way. Artificial chimeric organisms (those composed of cells from more than one species) mix and match biological traits and functions to bring about beings that wouldn’t occur otherwise, transforming autonomous organisms into useful parts for plug and play. But while evolutionary processes will sift those forms and strategies that most benefit future organisms, our acts of creation primarily benefit us alone. Survival of the fittest gives way to the contrivance of the functional.

Yet, despite the disruptive nature of these technologies, CRISPR is already entrenched in our research and economic landscape: here is the power problem of our new technology. The efficiency of modern versions of CRISPR has allowed the technology to pick up users fast. It is now a commonplace tool in labs around the world – with uses amplified during the pandemic – and continues to be utilized in ethically provocative trials, including the cloning of mammal species. CRISPR has been normalised by stealth.

This largely uncontested rollout has been enabled by biases in the evaluation of who is at risk. Put bluntly, humans worry about humans, and take risks to non-humans less seriously. As such, there are vastly different acceptance thresholds for certain kinds of uses and these can be exploited by those that seek to deregulate or profit from the technologies…

… This discrepancy is evident in the anxieties of Jennifer Doudna, one of the Nobel-winning scientists who made the CRISPR breakthrough. In her book, A Crack in Creation, she writes of a dream in which Hitler appears to her with the face of a pig and questions her excitedly about the power she has unleashed. Doudna’s anxieties relate not to the pigs of her dream (who are subject to a wide range of CRISPR applications) but to the potential of eugenics re-emerging in human societies. Her dream reflects not only the inevitability that any technology such as this will bring equal parts destruction and reward, but also that we must confront uncomfortable ideas about what it is to be a creature as much as a creator. Recognizing that these technologies work in the bodies of all biological beings, including humans, is a continual assault on the reasoning behind a hard moral border between us and them.

At present, the lives of non-human animals are the experimental landscape for our technologies. Their powerlessness to protest the uses of their bodies, wombs, physical materials, or futures leaves them vulnerable to being the test sites for a wide range of possible human applications. As a direct consequence of the serviceability of the bodies of organisms, CRISPR has been integrated into our world with little fanfare, directly facilitating the power problem that will, eventually, impact us too. Given Collingridge’s dilemma, what concepts and strategies could help us reduce the risks from CRISPR?

The first thing we need is a new definition of pollution. When it comes to combustion engines and other technologies of the first industrial revolutions, pollution is by far the most consequential harm. Direct impacts include the release of particulate matter or chemical compounds like nitrogen oxides or carbon dioxide into the atmosphere. Pollution from traffic has an immediate impact, especially within fifty to one hundred metres of the roadside, with effects that we can measure, such as reduced growth rates or leaf damage in plants, or changes to soil chemistry and nutrient availability. On the other hand, long term effects of emissions, such as global warming, or the sustained impacts of waste on organisms and ecosystems, have proven tricky to anticipate and even harder to hold in mind…

…What is curious about the Fourth Industrial Revolution is that while several branches of science are arming us with the evidence that justifies an expansion of the moral circle to encompass a larger range of organisms, other branches are cranking up the objectification and exploitation of life-forms. As a result, there’s an obvious gap. Without addressing this, most concepts of pollution will remain anthropocentric. This may prove a critical misstep…

A provocative argument that “Gene Editing is Pollution,” from @TheIdeasLetter. Eminently worth reading in full.

See also: “The Ethics and Security Challenge of Gene Editing” and “The great gene editing debate: can it be safe and ethical?”

* Isaac Asimov

###

As we ponder permuted progeny, we might send microbiological birthday greetings to Jacques Lucien Monod; he was born on this date in 1910. A biochemist, he shared (with François Jacob and André Lwoff) the Nobel Prize in Physiology or Medicine in 1965, “for their discoveries concerning genetic control of enzyme and virus synthesis.”

But Monod, who became the director of the Pasteur Institute, also made significant contributions to the philosophy of science– in particular via his 1971 book (based on a series of his lectures) Chance and Necessity, in which he examined the philosophical implications of modern biology. The importance of Monod’s work as a bridge between the chance and necessity of evolution and biochemistry on the one hand, and the human realm of choice and ethics on the other, can be seen in his influence on philosophers, biologists, and computer scientists including Daniel Dennett, Douglas Hofstadter, Marvin Minsky, and Richard Dawkins… and as a context setter for the deliberations suggested above…

“The importance of experimental proof, on the other hand, does not mean that without new experimental data we cannot make advances”*…

Adam Becker explains why demanding that a theory be falsifiable or observable, without any subtlety, will hold science back…

The Viennese physicist Wolfgang Pauli suffered from a guilty conscience. He’d solved one of the knottiest puzzles in nuclear physics, but at a cost. ‘I have done a terrible thing,’ he admitted to a friend in the winter of 1930. ‘I have postulated a particle [the neutrino] that cannot be detected.’

Despite his pantomime of despair, Pauli’s letters reveal that he didn’t really think his new sub-atomic particle would stay unseen. He trusted that experimental equipment would eventually be up to the task of proving him right or wrong, one way or another. Still, he worried he’d strayed too close to transgression. Things that were genuinely unobservable, Pauli believed, were anathema to physics and to science as a whole.

Pauli’s views persist among many scientists today. It’s a basic principle of scientific practice that a new theory shouldn’t invoke the undetectable. Rather, a good explanation should be falsifiable – which means it ought to rely on some hypothetical data that could, in principle, prove the theory wrong. These interlocking standards of falsifiability and observability have proud pedigrees: falsifiability goes back to the mid-20th-century philosopher of science Karl Popper, and observability goes further back than that. Today they’re patrolled by self-appointed guardians, who relish dismissing some of the more fanciful notions in physics, cosmology and quantum mechanics as just so many castles in the sky. The cost of allowing such ideas into science, say the gatekeepers, would be to clear the path for all manner of manifestly unscientific nonsense.

But for a theoretical physicist, designing sky-castles is just part of the job. Spinning new ideas about how the world could be – or in some cases, how the world definitely isn’t – is central to their work. Some structures might be built up with great care over many years and end up with peculiar names such as inflationary multiverse or superstring theory. Others are fabricated and dismissed casually over the course of a single afternoon, found and lost again by a lone adventurer in the troposphere of thought.

That doesn’t mean it’s just freestyle sky-castle architecture out there at the frontier. The goal of scientific theory-building is to understand the nature of the world with increasing accuracy over time. All that creative energy has to hook back onto reality at some point. But turning ingenuity into fact is much more nuanced than simply announcing that all ideas must meet the inflexible standards of falsifiability and observability. These are not measures of the quality of a scientific theory. They might be neat guidelines or heuristics, but as is usually the case with simple answers, they’re also wrong, or at least only half-right.

Falsifiability doesn’t work as a blanket restriction in science for the simple reason that there are no genuinely falsifiable scientific theories. I can come up with a theory that makes a prediction that looks falsifiable, but when the data tell me it’s wrong, I can conjure some fresh ideas to plug the hole and save the theory.

The history of science is full of examples of this ex post facto intellectual engineering…

[Becker recounts the story of Herschel’s discovery of Uranus, the challenge it posed to Newtonian gravity, and Einstein’s ultimately saving theory; then returns to Pauli, and to Bohr’s attempts to use the same puzzle to refute the principle of conservation of energy; and finally explores the disagreement between Boltzmann, Maxwell and Clausius (on the one hand) and Mach (on the other) over atomic theory. He then considers competing theories for similar outcomes (that’s to say, theories that are observationally identical)…]

… the choices we make between observationally identical theories have a big impact upon the practice of science. The American physicist Richard Feynman pointed out that two wildly different theories that have identical observational consequences can still give you different perspectives on problems, and lead you to different answers and different experiments to conduct in order to discover the next theory. So it’s not just the observable content of our scientific theories that matters. We use all of it, the observable and the unobservable, when we do science. Certainly, we are more wary about our belief in the existence of invisible entities, but we don’t deny that the unobservable things exist, or at least that their existence is plausible.

Some of the most interesting scientific work gets done when scientists develop bizarre theories in the face of something new or unexplained. Madcap ideas must find a way of relating to the world – but demanding falsifiability or observability, without any sort of subtlety, will hold science back. It’s impossible to develop successful new theories under such rigid restrictions. As Pauli said when he first came up with the neutrino, despite his own misgivings: ‘Only those who wager can win.’…

We need madcap ideas: “What is good science?,” from @FreelanceAstro in @aeonmag.

Apposite: Charles Sanders Peirce on “abduction”

* Carlo Rovelli, Reality Is Not What It Seems

###

As we ponder proof, we might spare a thought for Donald William Kerst; he died on this date in 1993. A physicist, he helped develop the experimental approaches (and apparatus) of particle physics, the field in which Pauli’s neutrino was finally detected, by Clyde Cowan and Frederick Reines in 1956.

Kerst specialized in plasma physics, and worked on advanced particle accelerator concepts (accelerator physics). He developed the Betatron (1940), the first device to accelerate electrons (“beta particles”) to speeds high enough to have sufficient momentum to produce nuclear transformations in atoms. It influenced all subsequent particle accelerators.
