Posts Tagged ‘evolution’

“Brains exist because the distribution of resources necessary for survival and the hazards that threaten survival vary in space and time”*…

And, it seems, they not only evolve, but in ways and with a frequency we’ve only just begun to appreciate. It’s long been noted that evolution seems to have a thing for “carcinization”– crabs have evolved separately at least five times. (Oh, and apparently also for anteaters…) Recent findings hint that evolution might have the same sort of jones for the brain. Amy Maxmen reports…

Our brains, perched atop a network of nerve cells that ascend the length of our bodies, are thought to have arisen once in an animal hundreds of millions of years ago and then evolved over time. However, new findings suggest instead that brains and nervous systems originated multiple times from scratch.

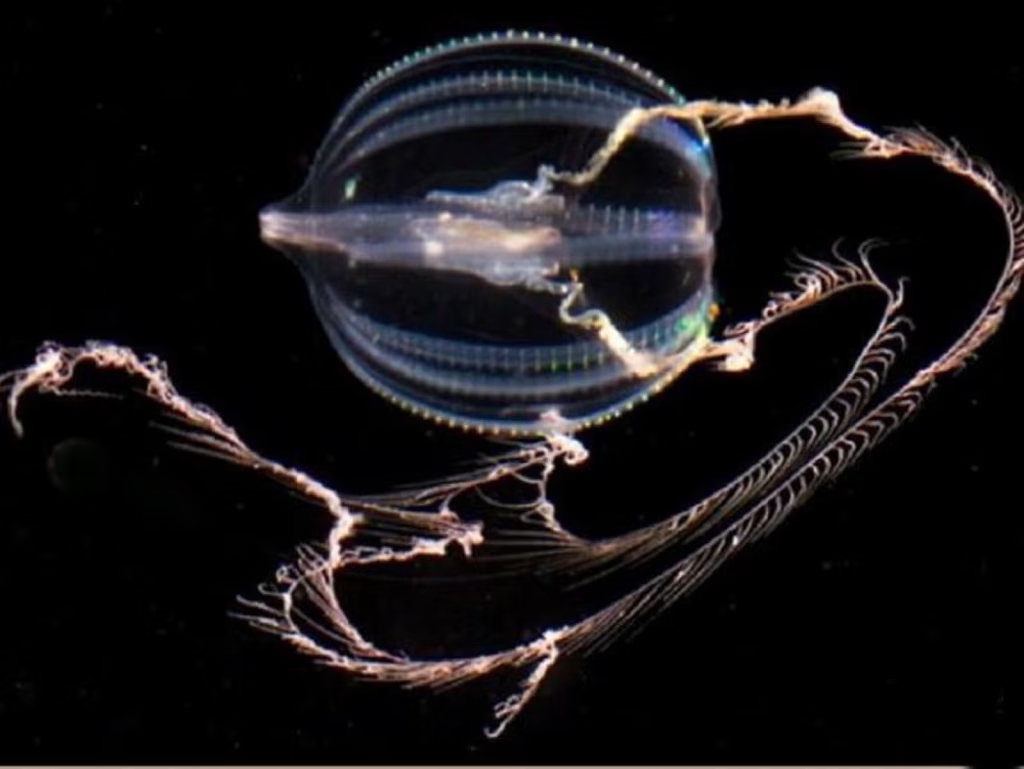

The findings, published today in Nature, highlight an ancient and gelatinous marine predator called a comb jelly [pictured at top]. Unlike pulsating jellyfish, comb jellies swim by “rowing” their many hair-like cilia, which are arranged in rows called combs. They possess rudimentary brains and sophisticated nervous systems replete with elongated cells that communicate through synapses much like our own. Some comb jellies show mirror-like bilateral symmetry, as do we. And like most animals, their muscles derive from a middle tissue layer, which does not exist in jellyfish or sponges, another ancient type of aquatic creature.

So it’s little wonder that biologists have long placed the comb jelly group close to worms, flies, and humans on the evolutionary tree of life; sponges emerge at the base, meaning that this group appeared first. In this traditional view, complex body parts like the brain and muscles arose gradually, and only once, since those parts look similar across related animals, and the chances of that same evolutionary process being repeated seem slim.

But this scenario was shaken by a report in Science last year, which suggested that the comb jelly group emerged before jellyfish and even the brainless, muscle-less sponges, more than 550 million years ago.

Some biologists doubted the rearrangement because it implied two equally uncomfortable possibilities: that the ancestor of all living animals had true muscles and a rudimentary brain, and then sponges and jellyfish lost those parts without a trace; or that the great animal ancestor was simple, and comb jellies evolved separately from all the other animals, yet ended up with rather similar nervous systems, muscles, and bilateral symmetry. When paleontologist Graham Budd heard the news last year, he said, “It is effectively saying animals evolved twice. Frankly, I’m not ready to believe it.”

Without a time machine, it’s impossible to know what our great ancestor looked like. However, today’s report adds more support to the notion that she was simple and comb jellies independently evolved their complex body parts. Leonid Moroz, a neurobiologist at the University of Florida’s Whitney Laboratory for Marine Bioscience, and his colleagues confirm comb jellies’ position below sponges at the base of the evolutionary tree with an analysis of genetic sequences from 11 comb jelly species…

… In an essay for Nautilus called “Evolution, You’re Drunk,” I described how hypotheses entrenched in the notion that evolution leads toward increasing complexity have recently begun to teeter. Now Moroz’s study adds another shove. It seconds the finding that simple sponges, long placed at the base of the evolutionary tree, actually evolved after the sophisticated comb jelly group arose. The story of how complexity evolves is more complex than scientists realized.

Furthermore, the brain—the epitome of complexity—seems to have sprouted up at least twice over evolutionary time. This clashes with the traditional notion that complex, multifaceted features come about in a very specific way, and each emerges just one time. “What everyone has said about complexity is wrong,” Moroz says. “It can happen more than once.”

Finding that comb jellies independently arrived at similar ends as other animals might also have surprised the late paleontologist Stephen Jay Gould, who famously doubted that animals would look the same today if the world were to begin again—if we could replay “the tape of life.”

Is such convergence in design a coincidence? Probably not, guesses Andreas Hejnol, an evolutionary developmental biologist at the Sars International Centre for Marine Molecular Biology in Norway. “If you need a fast communication system, it helps to have extended cells that communicate through chemicals,” he says. In other words, the structure of the nervous system reflects its function. So if intelligent life exists elsewhere in the universe, it’s not too far a stretch to think it could possess a brain comprised of trillions of neurons. Hejnol asks, “How else could it be?”…

The mysterious mechanism of evolution: “Evolution May Be Drunk, But It’s Serious About Making Brains,” from @amymaxmen.bsky.social in @nautil.us.

* John M. Allman, Evolving Brains

###

As we contemplate the changing comprehension of cerebra, we might send thoughtful birthday greetings to Sir Karl Raimund Popper; he was born on this date in 1902. One of the greatest philosophers of science of the 20th century, Popper is best known for his rejection of the classical inductivist views on the scientific method, in favor of empirical falsification: a theory in the empirical sciences can never be proven, but it can be falsified, meaning that it can and should be scrutinized by decisive experiments. (Or more simply put, whereas classical inductive approaches considered hypotheses false until proven true, Popper reversed the logic: conclusions drawn from an empirical finding are true until proven false.)

Popper was also a powerful critic of historicism in political thought, and (in books like The Open Society and Its Enemies and The Poverty of Historicism) an enemy of authoritarianism and totalitarianism (in which role he was a mentor to George Soros).

“An understanding of the natural world, and what’s in it is a source of not only great curiosity but great fulfillment”*…

Ah yes, but in what does that understanding consist? John Long considers the competing frameworks of Linnaeus and Buffon…

The modern science biography must hold back no punches in its mission to represent the subject’s life, equally celebrating their great works while including their personal shortcomings.

Jürgen Neffe’s Einstein: A Biography (2005) and Dava Sobel’s The Elements of Marie Curie (2024) are wonderful examples of this style. Such books succeed in clearly explaining the complex science of their subject’s work for non-scientific readers, enabling a deep appreciation of their achievements and bringing them to life as rounded, flawed humans.

Jason Roberts’ Every Living Thing – The Great and Deadly Race to Know all Life is another of these rare works. This engrossing, precisely researched book focuses on two central characters born in the same year: Carl Linnaeus (1707-1778), a Swede, and Frenchman Georges-Louis Leclerc, the Comte de Buffon (1707-1788), better known as just Buffon.

Roberts’ book won the 2025 Pulitzer Prize for biography. His writing pulls the reader effortlessly through the story, revealing delightful, unexpected twists and turns in the two men’s complex and disparate lives. Each worked diligently to reach a level of global notoriety for their many published books. Both are revered in the natural history world today.

Linnaeus, a biologist and physician, is known for his system of hierarchical classification: how all living things comprise a genus and species (we humans are Homo sapiens), which fit into families, orders, classes and so on. (A good many intermediate ranks were added later.) While his work has been hugely influential, Linnaeus is portrayed by Roberts at times as being lazy, vain and unethical.

Linnaeus was primarily driven to be the first to name new species. Buffon was working on a grand thesis of how all life’s organisms function and are related to one another. A wealthy count who inherited a vast fortune at the age of ten, Buffon trained as a lawyer but became fascinated by the trees that grew in his large garden.

Buffon is best known today for his extensive books on natural history and works on mathematics and cosmology. He calculated that the Earth was much older than the Bible predicted, and held that life sprang from unorganised matter. He explored the relationships between organisms rather than how they were classified. His core work formed the basis for modern evolutionary theory.

Why was all this important? At the time, the task of classifying plants was vital to the growing economies of nations. Travellers to the far reaches of the globe brought back examples of economically valuable new species, like plant foods, medicinal plants or beautiful ornamental specimens.

The author’s central thesis is that Linnaeus was not as brilliant as history paints him and that Buffon was a far greater genius for his day.

Where does genius come from, Roberts asks? Is it inherent by birth, grown from an inspiring education, or is it something within that is nurtured by passion?

Both these brilliant men who made a lasting mark on science came from not very inspiring families. Nor did they excel at school or university. This story shows success in academic work is not just about intellect, but intimately tied to the ethics and morality of doing research…

Eminently worth reading in full: “How do we understand life on Earth? A prize-winning biography charts the tension between two types of science ‘genius’” from @theconversation.com.

* David Attenborough, who also observed, “We moved from being a part of nature to being apart from nature.”

###

As we noodle on knowing, we might send birthday greetings to Gregor Mendel; he was born on this date in 1822 (though some sources give the date as July 20). A botanist, geneticist, and monk, he pioneered the study of heredity.

Mendel spent his adult life with the Augustinian monastery in Brünn, where, as a plant experimenter, he was the first to lay the mathematical foundation of the science of genetics, in what came to be called Mendelism. Over the period 1856-63, Mendel grew and analyzed over 28,000 pea plants. For each, he carefully studied plant height, pod shape, pod color, flower position, seed color, seed shape and flower color. He made two very important generalizations from his pea experiments, known today as the Laws of Heredity, and coined the genetic terms recessiveness and dominance. He read a paper on his studies in 1865 to the Brünn Society for Natural Sciences in Moravia– but it lay unappreciated until 1900.

“Good night, sleep tight, don’t let the bedbugs bite”*…

As Rodrigo Pérez Ortega reports, that admonition has a very long history…

Long before rats roamed sewers and cockroaches lurked in kitchen corners, another unwelcome guest plagued early civilizations. A new genomic study published today in Biology Letters suggests that bedbugs—the blood-feeding insects that haunt our hotel stays—were the first urban pests, proving an itchy menace for tens of thousands of years.

“This is really amazing,” says Klaus Reinhardt, an evolutionary biologist at the Dresden University of Technology who was not involved in the new study. “I think the hypothesis is quite solid.” Still, other researchers quibble over whether bedbugs can indisputably claim that title.

Many species of bedbugs depend on us—and our blood—to survive, but long ago, their prey of choice was probably exclusively bats. Genetic evidence suggests that about 245,000 years ago, some bedbugs made the jump to early humans.

This split led to two genetically distinct bedbug lineages. One kept feeding on bats and today remains largely confined to caves and natural habitats in Europe and the Middle East. The other followed humans into modern dwellings. Exactly how that scenario played out remained a mystery, however. That’s why Warren Booth, an evolutionary biologist at the Virginia Polytechnic Institute and State University, and his team set out to study the genome of the common bedbug (Cimex lectularius) in depth…

… [Their findings make] bedbugs strong contenders for the title of the world’s first true urban pest that relies solely on humans, the researchers claim. Unlike more recent urban interlopers that feast on our stored food and enjoy our cozy homes—like the German cockroach (Blattella germanica), which formed a close association with humans just 2000 years ago, or the black rat (Rattus rattus), whose commensal relationship began about 5000 years ago—bedbugs may have started parasitizing humans just as our ancestors started building permanent settlements…

… the new findings underscore how humans have shaped the evolution of urban insects. Compared with their bat-feeding cousins, human-feeding bedbugs are smaller, less hairy, and have larger limbs—adaptations likely suited to navigating smooth walls and synthetic bedding. Today’s bedbugs also carry many DNA mutations linked to insecticide resistance, a relatively recent trait that reflects the pressures of modern pest control. “They’re a remarkable yet horrible species,” Booth says.

Understanding how these pests evolved together with us could help improve strategies for controlling them, especially as cities continue to grow—and as bedbugs now feed on the poultry we raise. Further research could also help us understand how our own immune system evolved, since some people develop allergies for bedbug bites. As a start, Booth and his team are analyzing centuries-old bedbug specimens in museums, to track how the insects’ genomes—and populations—have evolved over the past century alongside us.

“There’s a pretty intimate association, whether we like it or not,” Booth says. “That’s not going away anytime soon.”…

“Bedbugs may be the first urban pest,” from @rpocisv.bsky.social in @science.org.

* common children’s rhyme

###

As we contemplate the chronicle of a co-evolved curse, we might recall that it was on this date in 1789 that Richard Kirwan published his essay in support of the phlogiston theory (the belief, dating to alchemical times, in the existence of a fire-like element, dubbed “phlogiston,” contained within combustible bodies and released during burning). Kirwan was among the last of its advocates.

A well-regarded scientist in the late 18th and early 19th centuries, Kirwan met and corresponded with Black, Lavoisier, Priestley, and Cavendish. Indeed, while scientific history remembers him as a defender of an incorrect theory, his work probably spurred Priestley and Lavoisier, who respectively discovered and named the actual elemental agent of combustion, oxygen.

But Kirwan is also remembered for a personal eccentricity (one of many) relevant to this post: he hated bugs (especially flies). He paid his servant a bounty for each one they killed.

“I think the next century will be the century of complexity”*…

… and as Philip Ball reports, a team of scientists at Carnegie Science agrees…

In 1950 the Italian physicist Enrico Fermi was discussing the possibility of intelligent alien life with his colleagues. If alien civilizations exist, he said, some should surely have had enough time to expand throughout the cosmos. So where are they?

Many answers to Fermi’s “paradox” have been proposed: Maybe alien civilizations burn out or destroy themselves before they can become interstellar wanderers. But perhaps the simplest answer is that such civilizations don’t appear in the first place: Intelligent life is extremely unlikely, and we pose the question only because we are the supremely rare exception.

A new proposal by an interdisciplinary team of researchers challenges that bleak conclusion. They have proposed nothing less than a new law of nature, according to which the complexity of entities in the universe increases over time with an inexorability comparable to the second law of thermodynamics — the law that dictates an inevitable rise in entropy, a measure of disorder. If they’re right, complex and intelligent life should be widespread.

In this new view, biological evolution appears not as a unique process that gave rise to a qualitatively distinct form of matter — living organisms. Instead, evolution is a special (and perhaps inevitable) case of a more general principle that governs the universe. According to this principle, entities are selected because they are richer in a kind of information that enables them to perform some kind of function.

This hypothesis, formulated by the mineralogist Robert Hazen [here] and the astrobiologist Michael Wong [here] of the Carnegie Institution in Washington, D.C., along with a team of others, has provoked intense debate. Some researchers have welcomed the idea as part of a grand narrative about fundamental laws of nature. They argue that the basic laws of physics are not “complete” in the sense of supplying all we need to comprehend natural phenomena; rather, evolution — biological or otherwise — introduces functions and novelties that could not even in principle be predicted from physics alone. “I’m so glad they’ve done what they’ve done,” said Stuart Kauffman, an emeritus complexity theorist at the University of Pennsylvania. “They’ve made these questions legitimate.”…

[Ball explains the origin and outline of Hazen’s and Wong’s conjecture, explores the critiques– among them, that it’s not clear how to test the hypothesis– and examines the resonant work on Assembly Theory being done by Lee Cronin and Sara Walker…]

… Wong said there is more work still to be done on mineral evolution, and they hope to look at nucleosynthesis and computational “artificial life.” Hazen also sees possible applications in oncology, soil science and language evolution. For example, the evolutionary biologist Frédéric Thomas of the University of Montpellier in France and colleagues have argued that the selective principles governing the way cancer cells change over time in tumors are not like those of Darwinian evolution, in which the selection criterion is fitness, but more closely resemble the idea of selection for function from Hazen and colleagues.

Hazen’s team has been fielding queries from researchers ranging from economists to neuroscientists, who are keen to see if the approach can help. “People are approaching us because they are desperate to find a model to explain their system,” Hazen said.

But whether or not functional information turns out to be the right tool for thinking about these questions, many researchers seem to be converging on similar questions about complexity, information, evolution (both biological and cosmic), function and purpose, and the directionality of time. It’s hard not to suspect that something big is afoot. There are echoes of the early days of thermodynamics, which began with humble questions about how machines work and ended up speaking to the arrow of time, the peculiarities of living matter, and the fate of the universe…

A new suggestion that complexity increases over time, not just in living organisms but in the nonliving world, promises to rewrite notions of time and evolution: “Why Everything in the Universe Turns More Complex,” from @philipcball.bsky.social and @quantamagazine.bsky.social.

See also: Benjamin Bratton’s explanation of the work he and his colleagues are doing at a new institute at UCSD: “Antikythera.” See his recent Long Now Foundation talk on this same subject here.

* Stephen Hawking

###

As we celebrate complication, we might spare a thought for G. N. Ramachandran (Gopalasamudram Narayanan Ramachandran); he died on this date in 2001. A biophysicist, he discovered the triple helical “coiled coil” structure of the collagen molecule, among other remarkable contributions to structural biology.

Ramachandran was a master of X-ray crystallography, and with his colleagues, constructed space-filling models of protein molecules. He devised the Ramachandran Plot, a method to diagram the conformation of polypeptides, polysaccharides and polynucleotides– which remains the international standard to describe protein structures.

Ramachandran, inspired by the ancient Syaad Nyaaya (doctrine of “may be”), also explored artificial intelligence. He developed the Boolean Vector Matrix Formulation, which has important applications in writing software for AI.
