Posts Tagged ‘Brain’

“Eureka!”*…

Whence insight?…

New research published in BMC Psychology suggests that the structural wiring of the brain may play a significant role in how people solve problems through sudden insight. The study indicates that individuals who frequently experience “Aha!” moments tend to have less organized white matter pathways in specific language-processing areas of the left hemisphere. These findings imply that a slightly less rigid neural structure might allow the brain to relax its focus, enabling the unique connections required for creative breakthroughs.

For decades, scientists have studied the phenomenon of insight, which occurs when a solution to a problem enters awareness suddenly and unexpectedly. This is often contrasted with analytical problem solving, which involves a deliberate and continuous step-by-step approach.

While previous studies using functional MRI and EEG have mapped the brain activity that occurs during these moments, there has been little understanding of the underlying physical structure that supports them. The researchers behind the new study aimed to determine if stable differences in white matter—the bundles of nerve fibers that connect different brain regions—predict an individual’s tendency to solve problems via insight.

“For over two decades, neuroscience has mapped what happens in the brain during these moments using EEG and fMRI. We know from prior research that insight feels sudden, tends to be accurate, and involves distinct functional activation patterns — including a burst of activity in the right temporal cortex just before the solution reaches awareness,” said study authors Carola Salvi of the Cattolica University of Milan and Simone A. Luchini of Pennsylvania State University.

“But one major question remained open: what structural features of the brain might make some people more likely to experience insight in the first place?”

“Most previous white matter studies of creativity did not specifically focus on Aha! experiences. They measured how many problems people solved, or how creatively, not how they solved them (with or without these sudden epiphanies). Yet insight and non-insight solutions are phenomenologically and neurally distinct processes.”

White matter acts as the communication infrastructure of the brain, transmitting signals between distant regions. To examine this structure, the researchers employed a technique called Diffusion Tensor Imaging (DTI). This method tracks the movement of water molecules within brain tissue.

“We wanted to know whether stable white matter microstructure — the brain’s anatomical wiring — differs depending on whether someone tends to solve problems through sudden insight or through deliberate step-by-step reasoning (non-insight solutions),” Salvi and Luchini explained. “Diffusion tensor imaging (DTI) allowed us to examine this structural dimension directly.”…

… The findings offered a counterintuitive perspective on brain connectivity. The analysis revealed that participants who solved more problems via insight exhibited lower fractional anisotropy in the left hemisphere’s dorsal language network. This network includes the arcuate fasciculus and the superior longitudinal fasciculus, pathways that connect brain regions responsible for language production, comprehension, and semantic processing.

“One striking finding was that people who more frequently experienced insight showed lower fractional anisotropy in specific left-hemisphere dorsal language pathways, including parts of the arcuate fasciculus and superior longitudinal fasciculus,” Salvi and Luchini told PsyPost.

“At first glance, that might sound counterintuitive. Fractional anisotropy is often interpreted as reflecting the coherence or organization of white matter pathways. In many cognitive domains, higher fractional anisotropy is associated with better performance.”

“But insight may operate differently. The left hemisphere is typically involved in focused, fine-grained semantic processing — narrowing in on dominant interpretations of words and concepts. The right hemisphere, by contrast, is thought to support broader, ‘coarse’ semantic coding — integrating more distantly related ideas. Slightly lower fractional anisotropy in left dorsal language pathways may reflect a system that is less tightly constrained by dominant interpretations.

“In other words, it may allow a partial ‘release’ from habitual patterns of thought and it is in line with other studies where lesions in the left frontotemporal regions have been shown to increase artistic creativity,” Salvi and Luchini continued. “Taken together, these findings imply that left hemispheric regions play a regulatory role in creativity and that their disruption lifts this constraint, thus promoting novel ideas.”…

This somehow makes your correspondent feel better about his messy desk…

More at: “Neuroscientists identify a unique feature in the brain’s wiring that predicts sudden epiphanies,” from @psypost.bsky.social.

The journal paper: “The white matter of Aha! moments.”

* Archimedes (after one of his famous insights)

###

As we ruminate on revelation, we might recall that it was on this date in 1939 that the college fad of swallowing live goldfish began at Harvard: a freshman named Lothrop Withington, Jr., reportedly bragged to his friends that he had once eaten a live fish. They bet him 10 bucks he couldn’t do it again. Perhaps because he was running for Class President, he took the challenge…

The moment of truth came on March 3, within the hallowed halls of Harvard. Standing in front of a crowd of grinning classmates and at least one Boston reporter, Withington dropped an ill-fated 3-inch goldfish into his mouth, gave a couple chews and swallowed. “The scales,” he later remarked, “caught a bit on my throat as it went down.”

Soon the word spread to other colleges. Other students began to take up the challenge, swallowing more and more goldfish each time to top the last record. By the time students were downing dozens of live, wriggling goldfish to uphold their school’s honor, the Massachusetts legislature stepped in and passed a law to “preserve the fish from cruel and wanton consumption.” The U.S. Public Health Service began to issue warnings that the goldfish could pass tapeworms and disease to swallowers. Within a few months of its start, the fad died out.

– Source

“If I only had a brain”*…

The estimable Brad DeLong ponders the Discovery Channel series Naked and Afraid, concluding that “the Scarecrow in The Wizard of Oz had a greatly exaggerated view of what he would have been able to do if he only had a brain”…

There is a shlock TV show, on the Discovery Channel, called “Naked & Afraid”.

In it, two humans are dropped into a wilderness somewhere, naked, with one and only one piece of technology each (usually something like a knife, a fire starter, or a fishing line). All around them are other mammals doing their mammal thing: living their lives, reproducing their populations, evolving to fit whatever niche they have found where they are. But the two humans dropped by themselves (well, they are surrounded by cameramen, sound technicians, drivers, logistical support, and such who do not help and who stay out of the field of view) do not. Instead, the humans proceed, not too slowly, to start starving to death.

I am not being figurative or metaphorical…

[DeLong details the altogether dire deterioration and resulting ailments of two recent “contestants”…]

… Perhaps you just shrug your shoulders and say: “humans are relatively inept”… The other mammals out in the Amazon have been equipped by Darwin’s Daemon with teeth, claws, instincts, and brains that allow them to get into daily caloric balance. We don’t have much in the way of teeth and claws. We do have opposable thumbs. We do have big brains. They are supposed to compensate. But perhaps you shrug your shoulders and say: “they do not compensate very well”. For, out in the wilderness, Melissa Miller’s brain and thumbs failed at the one job for which Darwin’s Daemon gave them to us, for which other mammals’ teeth, claws, instincts, sprinting speed, dodging quickness, and much smaller and thus less energetically expensive brains largely suffice.

The rule: a smart, knowledgeable human (or two) in the wilderness naked should be afraid: they are highly likely to start starving to death.

And yet: Somehow we are here. We have not all yet been eaten. We have been evolved. Our ancestors survived, and reproduced.

Our ancestors started to come down from the trees about seven million years ago. That was when we left the ancestors of our chimpanzee cousins still up in the forest canopy.

By five million years ago, the ardipitheci were walking upright when they had to, with much smaller and less sexually-dimorphic canines, but as yet with no signs of fire or stone‑tool use or indeed of semi-systematic butchery. Their brain cases were only 350cc, only 350 cubic centimeters. By 3.5 million years ago, the australopitheci afarenses were habitually walking on two legs with their 450cc brain-cases. By 2.5 million years ago, the homines habiles with their Oldowan stone toolkit and 650cc brain-cases were around. And paleontologists judge they deserve our genus name: homo. By 1.8 million years ago, there were the homines erecti spreading out across the world, with their Acheulean handaxes, their endurance walking/running, and their 950cc brain-cases. When we look back to 600,000 years ago, the world was then populated by the likes of the homines heidelbergenses: widely controlled fire; complex hunting with tools like spears.

These people were not yet us: Their brain-cases were only 3/4 of the size of our brain-cases of 1350cc. They did not have organized big‑game hunting with spears, complex prepared‑core toolmaking techniques, long‑distance mobility, or evidence of our sustained and cumulative symbolic culture—cave art and engravings, personal ornaments, ritual burials, complex language‑supported planning, long‑distance exchange networks, composite tools made with adhesives, tailored clothing, or shelters. They did not have the final brain expansion, the globular skull, the reduced brow, or the chin.

And between 300,000 and 200,000 years ago there emerged people we definitely call us: homines sapientes, albeit “archaic”, with our brain-case size of 1350cc, but without the fully globular skull, the reduced brow, or the chin.

From a chimpanzee-sized brain one-quarter the size of ours five million years ago to our current state, our ancestors and then we have been evolved. And now we are here. So how can there have been so much selection pressure for larger brains when, even today, out in the wilderness they are insufficient to keep us, when naked individuals, from being hungry and afraid?

You know where I am going here. The answer, of course, is simple: What is smart, what the brain is good for, is not each of our brains but all of our brains thinking together, along with the tools that we, and those who came before us, have made: tools that no one individual could make in a lifetime, and that embody all of that thinking-together. Melissa Miller is an expert on knives, how to use them, and what to use them for. She could not make one from scratch.

From long-ago Acheulean handaxes to contemporary hunger in the Amazon, the throughline is simple: selection favored group knowledge and group production by a specialized division of labor, not solo genius. Our edge was and is not claws or speed; nor was it, or is it, the ability to think up clever solutions to problems on the fly. Instead, it was pooled memory and anthology thinking-power, plus the division of labor that allows us to carve tools that contain the results of that collective thinking-power…

“Does Each of Us Have a Big Enough Brain to Compensate for Our Lack of Fangs, Claws, Sprinting Speed, & Dodging Quickness?” from @delong.social.

* “the Scarecrow” in The Wizard of Oz

###

As we band together, we might recall that it was on this date in 1926 that Winnie-the-Pooh was first published…

The story behind the name of the bear that was stuffed with fluff began years before the book Winnie-the-Pooh by A.A. Milne [here] was published on this day in 1926. During the First World War, Canadian Lieutenant Harry Colebourn bought a bear cub and named her “Winnie” after his adopted hometown of Winnipeg, Manitoba. She was later brought to the London Zoo, where Milne’s son, Christopher Robin, would visit. [See also here.]

Christopher re-named his own teddy bear, Edward Bear, to Winnie-the-Pooh. His father named the characters in his book after Christopher’s stuffed animals including Piglet, Eeyore, Kanga, Roo and Tigger. (Mr. Milne added Owl and Rabbit).

In 1961, Walt Disney Productions bought the rights to the stories to create a series of cartoon shorts, beginning with Winnie the Pooh and the Honey Tree, which debuted in 1966. The last full-length animated movie from Disney, simply titled Winnie the Pooh, came out in theaters in 2011; the live-action movie about the inspiration for the stories, Goodbye Christopher Robin by Fox Searchlight Pictures, arrived in theaters in October 2017, and Disney followed up in 2018 with a live-action/CGI film about an adult Christopher Robin returning to the Hundred Acre Wood.

– source

“Brains exist because the distribution of resources necessary for survival and the hazards that threaten survival vary in space and time”*…

And, it seems, they not only evolve, but in ways and with a frequency we’ve only just begun to appreciate. It’s long been noted that evolution seems to have a thing for “carcinization”– crabs have evolved separately at least five times. (Oh, and apparently also for anteaters…) Recent findings hint that evolution might have the same sort of jones for the brain. Amy Maxmen reports…

Our brains, perched atop a network of nerve cells that ascend the length of our bodies, are thought to have arisen once in an animal hundreds of millions of years ago and then evolved over time. However, new findings suggest instead that brains and nervous systems originated multiple times from scratch.

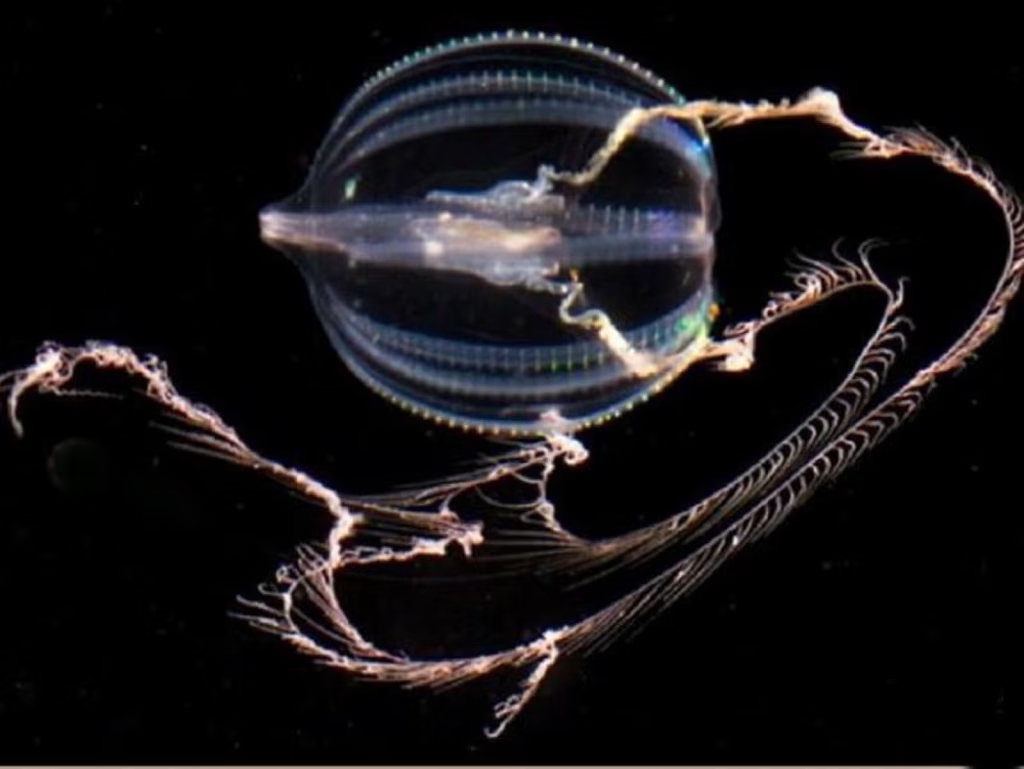

The findings, published today in Nature, highlight an ancient and gelatinous marine predator called a comb jelly [pictured at top]. Unlike pulsating jellyfish, comb jellies swim by “rowing” their many hair-like cilia, which are arranged in rows called combs. They possess rudimentary brains and sophisticated nervous systems replete with elongated cells that communicate through synapses much like our own. Some comb jellies show mirror-like bilateral symmetry, as do we. And like most animals, their muscles derive from a middle tissue layer, which does not exist in jellyfish or sponges, another ancient type of aquatic creature.

So it’s little wonder that biologists have long placed the comb jelly group close to worms, flies, and humans on the evolutionary tree of life; sponges emerge at the base, meaning that this group appeared first. In this traditional view, complex body parts like the brain and muscles arose gradually, and only once, since those parts look similar across related animals, and the chances of that same evolutionary process being repeated seem slim.

But this scenario was shaken by a report in Science last year, which suggested that the comb jelly group emerged before jellyfish and even the brainless, muscle-less sponges, more than 550 million years ago.

Some biologists doubted the rearrangement because it implied two equally uncomfortable possibilities: that the ancestor of all living animals had true muscles and a rudimentary brain, and then sponges and jellyfish lost those parts without a trace; or that the great animal ancestor was simple, and comb jellies evolved separately from all the other animals, yet ended up with rather similar nervous systems, muscles, and bilateral symmetry. When paleontologist Graham Budd heard the news last year, he said, “It is effectively saying animals evolved twice. Frankly, I’m not ready to believe it.”

Without a time machine, it’s impossible to know what our great ancestor looked like. However, today’s report adds more support to the notion that she was simple and comb jellies independently evolved their complex body parts. Leonid Moroz, a neurobiologist at the University of Florida’s Whitney Laboratory for Marine Bioscience, and his colleagues confirm comb jellies’ position below sponges at the base of the evolutionary tree with an analysis of genetic sequences from 11 comb jelly species…

… In an essay for Nautilus called “Evolution, You’re Drunk,” I described how hypotheses entrenched in the notion that evolution leads toward increasing complexity have recently begun to teeter. Now Moroz’s study adds another shove. It seconds the finding that simple sponges, long placed at the base of the evolutionary tree, actually evolved after the sophisticated comb jelly group arose. The story of how complexity evolves is more complex than scientists realized.

Furthermore, the brain—the epitome of complexity—seems to have sprouted up at least twice over evolutionary time. This clashes with the traditional notion that complex, multifaceted features come about in a very specific way, and each emerges just one time. “What everyone has said about complexity is wrong,” Moroz says. “It can happen more than once.”

Finding that comb jellies independently arrived at similar ends as other animals might also have surprised the late paleontologist Stephen Jay Gould, who famously doubted that animals would look the same today if the world were to begin again—if we could replay “the tape of life.”

Is such convergence in design a coincidence? Probably not, guesses Andreas Hejnol, an evolutionary developmental biologist at the Sars International Centre for Marine Molecular Biology in Norway. “If you need a fast communication system, it helps to have extended cells that communicate through chemicals,” he says. In other words, the structure of the nervous system reflects its function. So if intelligent life exists elsewhere in the universe, it’s not too far a stretch to think it could possess a brain composed of trillions of neurons. Hejnol asks, “How else could it be?”…

The mysterious mechanism of evolution: “Evolution May Be Drunk, But It’s Serious About Making Brains,” from @amymaxmen.bsky.social in @nautil.us.

* John M. Allman, Evolving Brains

###

As we contemplate the changing comprehension of cerebra, we might send thoughtful birthday greetings to Sir Karl Raimund Popper; he was born on this date in 1902. One of the greatest philosophers of science of the 20th century, Popper is best known for his rejection of the classical inductivist views on the scientific method, in favor of empirical falsification: a theory in the empirical sciences can never be proven, but it can be falsified, meaning that it can and should be scrutinized by decisive experiments. (Or, more simply put, whereas classical inductive approaches considered hypotheses false until proven true, Popper reversed the logic: conclusions drawn from empirical findings stand provisionally until proven false.)

Popper was also a powerful critic of historicism in political thought, and (in books like The Open Society and Its Enemies and The Poverty of Historicism) an enemy of authoritarianism and totalitarianism (in which role he was a mentor to George Soros).

“The brain has corridors surpassing / Material place…”*

Our brains, Luiz Pessoa suggests, are much less like machines than they are like the murmurations of a flock of starlings or an orchestral symphony…

When thousands of starlings swoop and swirl in the evening sky, creating patterns called murmurations, no single bird is choreographing this aerial ballet. Each bird follows simple rules of interaction with its closest neighbours, yet out of these local interactions emerges a complex, coordinated dance that can respond swiftly to predators and environmental changes. This same principle of emergence – where sophisticated behaviours arise not from central control but from the interactions themselves – appears across nature and human society.

Consider how market prices emerge from countless individual trading decisions, none of which alone contains the ‘right’ price. Each trader acts on partial information and personal strategies, yet their collective interaction produces a dynamic system that integrates information from across the globe. Human language evolves through a similar process of emergence. No individual or committee decides that ‘LOL’ should enter common usage or that the meaning of ‘cool’ should expand beyond temperature (even in French-speaking countries). Instead, these changes result from millions of daily linguistic interactions, with new patterns of speech bubbling up from the collective behaviour of speakers.

These examples highlight a key characteristic of highly interconnected systems: the rich interplay of constituent parts generates properties that defy reductive analysis. This principle of emergence, evident across seemingly unrelated fields, provides a powerful lens for examining one of our era’s most elusive mysteries: how the brain works.

The core idea of emergence inspired me to develop the concept I call the entangled brain: the need to understand the brain as an interactionally complex system where functions emerge from distributed, overlapping networks of regions rather than being localised to specific areas. Though the framework described here is still a minority view in neuroscience, we’re witnessing a gradual paradigm transition (rather than a revolution), with increasing numbers of researchers acknowledging the limitations of more traditional ways of thinking…

Complexity, emergence, and consciousness: “The entangled brain” from @aeon.co. Read on for the provocative details.

* Emily Dickinson

###

As we think about thinking, we might send ambivalent birthday greetings to Robert Yerkes; he was born on this date in 1876. A psychologist, ethologist, and primatologist, he is best remembered as a principal developer of comparative (animal) psychology in the U.S. (his book The Dancing Mouse (1908) helped establish the use of mice and rats as standard subjects for experiments in psychology) and for his work in intelligence testing.

But in his later life, Yerkes began to broadcast his support for eugenics. Modern academics broadly consider these views specious, based on outmoded and incorrect racialist theories.

“The world of reality has its limits; the world of imagination is boundless”*…

Still, it’s useful to know the difference… and as Yasemin Saplakoglu explains, that’s a complex process– one that science takes very seriously…

As I sit at my desk typing up this newsletter, I can see a plant to my left, a water bottle to my right and a gorilla sitting across from me. The plant and bottle are real, but the gorilla is a product of my mind — and I intuitively know that this is true. That’s because my brain, like most people’s, has the ability to distinguish reality from imagination. If it didn’t, or if I had a condition that disrupts this distinction, I’d constantly see gorillas and elephants where they don’t exist.

Imagination is sometimes described as perception in reverse. When we look at an object, electromagnetic waves enter the eyes, where they are translated into neural signals that are then sent to the visual cortex at the back of the brain. This process generates an image: “plant.” With imagination, we start with what we want to see, and the brain’s memory and semantic centers send signals to the same brain region: “gorilla.”

In both cases, the visual cortex is activated. Recalling memories can also activate some of the same regions. Yet the brain can clearly distinguish between imagination, perception and memory in most cases (though it is still possible to get confused). How does it keep everything straight?

By probing the differences between these processes, neuroscientists are untangling how the human brain creates our experience. They’re finding that even our perception of reality is in many ways imagined. “Underneath our skull, everything is made up,” Lars Muckli, a professor of visual and cognitive neurosciences at the University of Glasgow, told me. “We entirely construct the world in its richness and detail and color and sound and content and excitement. … It is created by our neurons.”

To distinguish reality and imagination, the brain might have some kind of “reality threshold,” according to one theory. Researchers recently tested this by asking people to imagine specific images against a backdrop — and then secretly projected faint outlines of those images there. Participants typically recognized when they saw a real projection versus their imagined one, and those who rated images as more vivid were also more likely to identify them as real. The study suggested that when processing images, the brain might make a judgment on reality based on signal strength. If the signal is weak, the brain takes it for imagination. If it’s strong, the brain deems it real. “The brain has this really careful balancing act that it has to perform,” Thomas Naselaris, a neuroscientist at the University of Minnesota, told me. “In some sense it is going to interpret mental imagery as literally as it does visual imagery.”

Although recalling memories is a creative and imaginative process, it activates the visual cortex as if we were seeing. “It started to raise the question of whether a memory representation is actually different from a perceptual representation at all,” Sam Ling, a neuroscientist at Boston University, told me. A recent study looked to identify how memories and perceptions are constructed differently at the neurobiological level. When we perceive something, visual cues undergo layers of processing in the visual cortex that increase in complexity. Neurons in earlier parts of this process fire more precisely than those that get involved later. In the study, researchers found that during memory recall, neurons fired in a much blurrier way through all the layers. That might explain why our memories aren’t often as crisp as what we’re seeing in front of us…

“How Do Brains Tell Reality From Imagination?” from @yaseminsaplakoglu.bsky.social in @quantamagazine.bsky.social.

* Jean-Jacques Rousseau

###

As we parse perception, we might send mindful birthday greetings to a man whose work figures into the history of science’s struggle on this issue, Franz Brentano; he was born on this date in 1838. A philosopher and psychologist, he wrote Psychology from an Empirical Standpoint (1874), which is considered his magnum opus and is credited with having reintroduced the medieval scholastic concept of intentionality into contemporary philosophy and psychology.

Brentano also studied perception, with conclusions that prefigure the discussion above…

He is also well known for claiming that Wahrnehmung ist Falschnehmung (‘perception is misconception’), that is to say, perception is erroneous. In fact he maintained that external, sensory perception could not tell us anything about the de facto existence of the perceived world, which could simply be illusion. However, we can be absolutely sure of our internal perception. When I hear a tone, I cannot be completely sure that there is a tone in the real world, but I am absolutely certain that I do hear. This awareness, of the fact that I hear, is called internal perception. External perception, sensory perception, can only yield hypotheses about the perceived world, but not truth. Hence he and many of his pupils (in particular Carl Stumpf and Edmund Husserl) thought that the natural sciences could only yield hypotheses and never universal, absolute truths as in pure logic or mathematics.

However, in a reprinting of his Psychologie vom Empirischen Standpunkte (Psychology from an Empirical Standpoint), he recanted this previous view. He attempted to do so without reworking the previous arguments within that work, but it has been said that he was wholly unsuccessful. The new view states that when we hear a sound, we hear something from the external world; there are no physical phenomena of internal perception… – source
