Posts Tagged ‘society’

“If I only had a brain”*…

The estimable Brad DeLong ponders the Discovery Channel series Naked and Afraid, concluding that “the Scarecrow in The Wizard of Oz had a greatly exaggerated view of what he would have been able to do if he only had a brain”…

There is a shlock TV show, on the Discovery Channel, called “Naked & Afraid”.

In it, two humans are dropped into a wilderness somewhere, naked, with one and only one piece of technology each (usually something like a knife, a fire starter, or a fishing line). All around them are other mammals doing their mammal thing: living their lives, reproducing their populations, evolving to fit whatever niche they have found where they are. But the two humans dropped by themselves (well, they are surrounded by cameramen, sound technicians, drivers, logistical support, and such who do not help and who stay out of the field of view) do not. Instead, the humans proceed, not too slowly, to start starving to death.

I am not being figurative or metaphorical…

[DeLong details the altogether dire deterioration and resulting ailments of two recent “contestants”…]

… Perhaps you just shrug your shoulders and say: “humans are relatively inept”… The other mammals out in the Amazon have been equipped by Darwin’s Daemon with teeth, claws, instincts, and brains that allow them to get into daily caloric balance. We don’t have much in the way of teeth and claws. We do have opposable thumbs. We do have big brains. They are supposed to compensate. But perhaps you shrug your shoulders and say: “they do not compensate very well”. For, out in the wilderness, Melissa Miller’s brain and thumbs failed at the one job for which Darwin’s Daemon gave them to us, for which other mammals’ teeth, claws, instincts, sprinting speed, dodging quickness, and much smaller and thus less energetically expensive brains largely suffice.

The rule: a smart, knowledgeable human (or two) in the wilderness naked should be afraid: they are highly likely to start starving to death.

And yet: Somehow we are here. We have not all yet been eaten. We have been evolved. Our ancestors survived, and reproduced.

Our ancestors started to come down from the trees about seven million years ago. That was when we left the ancestors of our chimpanzee cousins still up in the forest canopy.

By five million years ago, the ardipitheci were walking upright when they had to, with much smaller and less sexually-dimorphic canines, but as of then with no signs of fire or stone‑tool use or indeed of semi-systematic butchery. Their brain cases were only 350cc, only 350 cubic centimeters. By 3.5 million years ago, the australopitheci afarenses were habitually walking on two legs with their 450cc brain-cases. By 2.5 million years ago, the homines habiles with their Oldowan stone toolkit and 650cc brain-cases were around. And paleontologists judge they deserve our genus name: homo. By 1.8 million years ago, there were the homines erecti spreading out across the world, with their Acheulean handaxes, their endurance walking/running, and their 950cc brain-cases. When we look back 600,000 years ago, the world was then populated by the likes of the homines heidelbergenses: widely-controlled fire; complex hunting with tools like spears.

These people were not yet us: Their brain-cases were only 3/4 of the size of our brain-cases of 1350cc. They did not have organized big‑game hunting with spears, complex prepared‑core toolmaking techniques, long‑distance mobility, or evidence of our sustained and cumulative symbolic culture—cave art and engravings, personal ornaments, ritual burials, complex language‑supported planning, long‑distance exchange networks, composite tools made with adhesives, tailored clothing, or shelters. They did not have the final brain expansion, the globular skull, the reduced brow, or the chin.

And between 300,000 and 200,000 years ago there emerged people we definitely call us: homines sapientes, albeit “archaic”, with our brain-case size of 1350cc, but without the fully globular skull, the reduced brow, or the chin.

From a chimpanzee-sized brain one-quarter the size of ours five million years ago to our current state, our ancestors and then we have been evolved. And now we are here. So how can there have been so much selection pressure for larger brains when, even today, out in the wilderness they are insufficient to keep us, when naked individuals, from being hungry and afraid?

You know where I am going here. The answer, of course, is simple: What is smart—what the brain is good for—is not each of our brains, but all of our brains thinking together. And the tools that we, and those who came before us, have made—tools that no one individual could make in a lifetime, and that embody all of that thinking-together. Melissa Miller is an expert on knives, how to use them, and what to use them for. She could not make one from scratch.

From long-ago Acheulean handaxes to contemporary hunger in the Amazon, the throughline is simple: selection favored group knowledge and group production by a specialized division of labor, not solo genius. Our edge not only was and is not claws or speed; it also was and is not the ability to think up clever solutions to problems on the fly. Instead, it was pooled memory and anthology thinking-power, plus the division of labor that allows us to carve tools that contain the results of that collective thinking-power…

“Does Each of Us Have a Big Enough Brain to Compensate for Our Lack of Fangs, Claws, Sprinting Speed, & Dodging Quickness?” from @delong.social.

* “the Scarecrow” in The Wizard of Oz

###

As we band together, we might recall that it was on this date in 1926 that Winnie-the-Pooh was first published…

The origin of the name of the bear that was stuffed with fluff began years before the book Winnie-the-Pooh by A.A. Milne [here] was published on this day in 1926. During the First World War, Canadian Lieutenant Harry Colebourn caught a bear and named her “Winnie” after his adopted hometown of Winnipeg, Manitoba. She was then brought to the London Zoo, where Milne’s son, Christopher Robin, would visit. [See also here.]

Christopher re-named his own teddy bear, Edward Bear, to Winnie-the-Pooh. His father named the characters in his book after Christopher’s stuffed animals including Piglet, Eeyore, Kanga, Roo and Tigger. (Mr. Milne added Owl and Rabbit).

In 1961, Walt Disney Productions bought the rights to the stories to create a series of cartoon shorts beginning with Winnie the Pooh and the Honey Tree which debuted in 1966. The last full-length animated movie from Disney, simply titled Winnie the Pooh, came out in theaters in 2011; the live action movie about the inspiration of the stories, Goodbye Christopher Robin by Fox Searchlight Pictures arrived in theaters in October of 2017 and Disney followed up with a live action/CGI about an adult Christopher Robin returning to the 100 Acre Wood in 2018.

– source

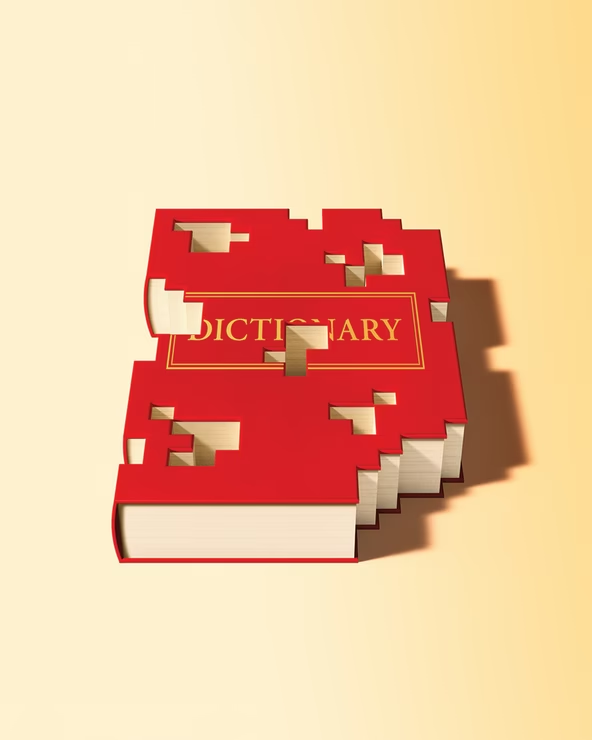

“I was reading the dictionary. I thought it was a poem about everything.”*…

“Obsolete (adj.): no longer in use or no longer useful”… Stefan Fatsis on the challenges faced by the purveyors of today’s dictionaries…

In 2015, I settled in at the Springfield, Massachusetts, headquarters of Merriam-Webster, America’s most storied dictionary company. My project was to document the ambitious reinvention of a classic, and I hoped to get some definitions of my own into the lexicon along the way. (A favorite early drafting effort, which I couldn’t believe wasn’t already included, was dogpile : “a celebration in which participants dive on top of each other immediately after a victory.”) Merriam-Webster’s overhaul of its signature work, Webster’s Third New International Dictionary, Unabridged—a 465,000-word, 2,700-page, 13.5-pound doorstop published in 1961 and never before updated—was already in full swing. The revision, which would be not a hardback book but an online-only edition, requiring a subscription, was expected to take decades.

Not long after my arrival, though, everything changed. Pageviews were declining for Merriam-Webster.com, the company’s free, ad-driven revenue engine: Tweaks to Google’s algorithms had punished Merriam’s search results. The company had always been lean and profitable, but the financial hit was real. Merriam’s parent, Encyclopedia Britannica, was facing challenges of its own—who needed an encyclopedia in a Wikipedia world?—and ordered cuts. Merriam laid off more than a dozen staffers. Its longtime publisher, John Morse, was forced into early retirement. The revision of Merriam’s unabridged masterpiece was abandoned.

Call it the paradox of the modern dictionary. We’re in a golden age for the study and appreciation of words—a time of “meta awareness” of language, as one lexicographer put it to me. Dictionaries are more accessible than ever, available on your laptop or phone. More people use them than ever, and dictionary publishers now possess the digital wherewithal to closely track that use. Podcasts, newsletters, and Words of the Year have popularized neologisms, etymologies, and usage trends. Meanwhile, analytical software has revolutionized linguistic inquiry, enabling greater understanding of the ways language works—when, how, and why words break out; the specific contexts for expressions and idioms. And all of that was true long before the rise of AI.

But these advances are also strangling the business of the dictionary. Definitions, professional and amateur, are a click away, and most people don’t care or can’t tell whether what pops up in a search is expert research, crowdsourced jottings, scraped data, or zombie websites. Before he left Merriam, Morse told me that legacy dictionaries face the same growing popular distrust of traditional authorities that media and government have encountered…

[Fatsis recounts the recent troubled commercial history of lexicography: Merriam, Dictionary.com, et al…]

It’s hard to know what future business model might save the industry. Getting swallowed by a tech giant expecting hockey-stick growth has proved untenable. A billionaire willing to let the dictionary just be the dictionary—a self-sustaining company with a modest staff performing an outsize cultural job that might not always be profitable—looks less likely after Dan Gilbert’s foray. A grand national dictionary project—some collaboration among government, private, nonprofit, and academic institutions—feels like the Platonic ideal. But with universities and intellectual inquiry under assault in 2025, I’m not holding my breath.

At Merriam-Webster, the standard capitalist model is working, at least for now, as is its hybrid print-digital approach. The publisher has rebounded from its mid-2010s struggles. It was a social-media darling during the first Trump administration, racking up likes and retweets for its smart-alecky and politically subversive social-media persona. (When Donald Trump tweeted “unpresidented” instead of “unprecedented,” the Merriam account responded: “Good morning! The #WordOfTheDay is … not ‘unpresidented’. We don’t enter that word. That’s a new one.”) Britannica invested in software, hardware, and humans to enable Merriam to better navigate Google’s algorithms. Merriam added a phalanx of games, including Wordle knockoffs and a dictionary-based crossword, to attract and retain visitors.

Merriam has outlasted a long line of American dictionaries. But plenty of household media names have been humbled by the shifting habits of digital consumers. Even before Google’s AI Overview began taking clicks from definitions written by flesh-and-bone lexicographers, the trajectory of the industry was clear.

After Merriam shut down its online unabridged revision, I stuck around the company’s 85-year-old brick headquarters, reporting and defining. I eventually drafted about 90 definitions. Most of them didn’t make the cut. But a handful are enshrined online, including politically charged terms such as microaggression and alt-right, and whimsical ones such as sheeple and, yes, dogpile.

While I’m proud of these small contributions to lexicography, my wanderings through dictionary culture convinced me of something far more important: the urgent need to save this slowly fading business. Twenty years ago, an estimated 200 full-time commercial lexicographers were working in the United States; today the number is probably less than a quarter of that. At a time when contentious words dominate our conversations—think insurrection and fascism and fake news and woke—the need for dictionaries to chronicle and explain language, and serve as its watchdog, has never been greater…

Adapted from Fatsis’ new book, Unabridged: The Thrill of and Threat to the Modern Dictionary: “Is This the End of the Dictionary?” @stefanfatsis.bsky.social in @theatlantic.com.

* Steven Wright

###

As we look it up, we might send carefully-chosen words of birthday greeting to William Cuthbert Faulkner; he was born on this date in 1897. A writer of novels, short stories, poetry, essays, screenplays, and one play, Faulkner is best remembered for his novels (e.g., The Sound and the Fury, As I Lay Dying, and Light in August) and stories set in “Yoknapatawpha County,” a setting largely based on Lafayette County, Mississippi, where Faulkner spent most of his life. They earned him the 1949 Nobel Prize for Literature.

Faulkner inadvertently expressed (what would pass in the context of the piece above for) confidence in the longevity of Ernest Hemingway’s work: in 1951 he observed that “he has never been known to use a word that might send a reader to the dictionary.”

On the other hand…

The past is never dead. It’s not even past.

From Requiem for a Nun, Act I, Scene III, by William Faulkner

“When you come out of the storm, you won’t be the same person who walked in. That’s what this storm’s all about.”*…

Jack Goldstone and Peter Turchin have a theory, one that led them some years ago to predict political upheaval in America in the 2020s. Here, their explanation of why it’s here and what we can do to temper it…

Almost three decades ago, one of us, Jack Goldstone, published a simple model to determine a country’s vulnerability to political crisis. The model was based on how population changes shifted state, elite and popular behavior. Goldstone argued that, according to this Demographic-Structural Theory, in the 21st century, America was likely to get a populist, America-first leader who would sow a whirlwind of conflict.

Then ten years ago, the other of us, Peter Turchin, applied Goldstone’s model to U.S. history, using current data. What emerged was alarming: The U.S. was heading toward the highest level of vulnerability to political crisis seen in this country in over a hundred years. Even before Trump was elected, Turchin published his prediction that the U.S. was headed for the “Turbulent Twenties,” forecasting a period of growing instability in the United States and western Europe.

Given the Black Lives Matter protests and cascading clashes between competing armed factions in cities across the United States, from Portland, Oregon to Kenosha, Wisconsin, we are already well on our way there. But worse likely lies ahead.

Our model is based on the fact that across history, what creates the risk of political instability is the behavior of elites, who all too often react to long-term increases in population by committing three cardinal sins. First, faced with a surge of labor that dampens growth in wages and productivity, elites seek to take a larger portion of economic gains for themselves, driving up inequality. Second, facing greater competition for elite wealth and status, they tighten up the path to mobility to favor themselves and their progeny. For example, in an increasingly meritocratic society, elites could keep places at top universities limited and raise the entry requirements and costs in ways that favor the children of those who had already succeeded.

Third, anxious to hold on to their rising fortunes, they do all they can to resist taxation of their wealth and profits, even if that means starving the government of needed revenues, leading to decaying infrastructure, declining public services and fast-rising government debts.

Such selfish elites lead the way to revolutions. They create simmering conditions of greater inequality and declining effectiveness of, and respect for, government. But their actions alone are not sufficient. Urbanization and greater education are needed to create concentrations of aware and organized groups in the populace who can mobilize and act for change.

Top leadership matters. Leaders who aim to be inclusive and solve national problems can manage conflicts and defer a crisis. However, leaders who seek to benefit from and fan political divisions bring the final crisis closer. Typically, tensions build between elites who back a leader seeking to preserve their privileges and reforming elites who seek to rally popular support for major changes to bring a more open and inclusive social order. Each side works to paint the other as a fatal threat to society, creating such deep polarization that little of value can be accomplished, and problems grow worse until a crisis comes along that explodes the fragile social order.

These were the conditions that prevailed in the lead-up to the great upheavals in political history, from the French Revolution in the eighteenth century, to the revolutions of 1848 and the U.S. Civil War in the nineteenth century, the Russian and Chinese revolutions of the twentieth century and the many “color revolutions” that opened the twenty-first century. So, it is eye-opening that the data show very similar conditions now building up in the United States…

They unpack their diagnosis, examine historical examples of successful– peaceful– resolution, and outline steps they recommend for a recovery from the hole we’ve dug for ourselves: “Welcome To The ‘Turbulent Twenties’,” from @noemamag.com.

For another “big cycle” take: “It All Has Happened Before for the Same Reasons” from Ray Dalio (@raydalioofficial.bsky.social).

On the subject of what happens if efforts to stem a turn to autocracy fail (a turn that would, Goldstone and Turchin argue, lead ultimately to revolution and systemic failure), an optimistic (?) view from Luke Kemp, author of Goliath’s Curse: The History and Future of Societal Collapse, who argues that “Collapse has historically benefited the 99%.”

And for a suggestion that history does indeed rhyme, a headline from 1939: “Goebbels Ends Careers of Five ‘Aryan’ Actors Who Made Witticisms About the Nazi Regime” (gift article from The New York Times).

* Haruki Murakami, Kafka on the Shore

###

As we batten down, we might recall that it was on this date in 1977, in the 5th season premiere of the series Happy Days, that a water-skiing “Fonzie” (Henry Winkler) jumped the shark— which has become a descriptive phrase for a creative work– or entity– that has evolved past its prime, that has reached a stage in which it has exhausted its core intent and is introducing new ideas that are discordant with or an extreme exaggeration (a caricature) of its original theme or purpose.

An example relevant to the piece linked above (in this case, of new, discordant ideas masquerading as “old” and authentic): “How Originalism Killed the Constitution,” from Jill Lepore.

“I think it would be a very good idea”*…

As we discuss global culture(s) or geo-politics, we often talk about “The West” (and the rest). In a review of Georgios Varouxakis’ new book The West: The History of an Idea, Andrew Kaufmann reminds us that it’s important to interrogate that defining concept…

What is the West? Many take the idea for granted, but few can define it. In this meticulously researched, engaging, and sometimes bewildering new book, The West: The History of an Idea, intellectual historian Georgios Varouxakis takes readers on a two-centuries-long tour of the many uses, definitions, and redefinitions of the term. Along the way, readers may find their own long-held assumptions and stereotypes challenged and even undermined.

The book makes a number of arguments, but for the purposes of this review, it’s worth focusing on just a few major ones. The first and most innovative argument of the book is this: The idea of the West as a transnational sociopolitical community distinct from the rest of the world is more recent than we think. This idea received its first sophisticated and coherent articulation in the 1820s from French philosopher Auguste Comte.

While historians and other academics had long looked to past societies like ancient Athens or medieval Europe as representing the “West” against some “other,” Comte was the first to coherently put together a future-oriented political program to be adopted and followed. Most scholars locate the future-focused version of the West’s inauguration in the 1890s, when the idea was used to justify imperial and colonial expansion. By contrast, Varouxakis argues that Comte and his followers wanted to build a West that was anti-imperialist, committed to science and reason, liberated from dogmatic Christianity, and fueled by altruism and sympathy.

As a progressive positivist, Comte saw the “Western Republic” as a via media between a hyper nationalism (of the French variety) and an overly abstract universalism. He imagined a way station that transcended the parochialism of family and nation and would one day be realized and embraced all over the world, even if it would take a full seven centuries from his own writing to come to fruition (that was Comte’s timeline). Neither tied to a particular nation like France (although Paris would be the center of this Republic until Constantinople would replace it), nor embodied by an abstract and universal cosmopolitanism, the Western Republic (or l’Occident) would be set off against its Other—in particular, Russia and the Orient. Still, over time this republic would non-coercively welcome the rest of the world into its fold.

Contrary to a common conception of “the West,” it was not to be a society (or society of societies) committed to democracy, individualism, or liberalism. It was instead a rejection of the hyper-individualism of the modern period, and it was an attempt to recover an older other-centered ethic that had been lost to a prior age.

The second major argument Varouxakis presents is that despite this idea of a transnational West that had its origin in Comte’s work, and despite Comte’s legacy that his disciples clearly carried across continents and centuries, the history of the idea of the West since Comte is complicated and contested. Put another way, while the specter of Comte hovers over the entire narrative, his vision is not always fully realized, nor is the meaning of the term always stable. This complicated history manifests itself in a number of different ways and carries with it some significant implications…

… Many casual users of “Western Civilization” will often identify it as one and the same with liberal democracy. They often find that somehow and at some point Britain came to embrace the West as being just that—liberal and democratic. Varouxakis complicates this picture by showing that while a few liberal voices in Britain were certainly also champions of Western Civilization, the more consistent and coherent users of the term were disciples of Comte and therefore much more illiberal in their thinking…

… Or take the more familiar East vs. West framework we associate with the Cold War, where surely the fault lines of Eastern totalitarianism against Western liberal capitalism are clean and clear. But even here the history is complicated, as the period begins with the acknowledgement that it was indeed Soviet Russia that helped to save “western civilization.” Indeed, it took forty years of gradual evolution for the idea of the “West” to finally crystallize around the shared commitment to economic, religious, and political freedom over and against Soviet planned economies, state-sanctioned atheism, and one-party politics with no free and fair elections…

… Given the winding road of the history of the West, it is instructive that there seems to be something of a settlement on its meaning for today, even if there are differences in its application. This can be seen most clearly in Varouxakis’ penultimate chapter on the dispute between Samuel Huntington and Francis Fukuyama after the end of the Cold War. Fukuyama of course is well known for his view that the West—in its embrace of liberal democracy and capitalism—had now emerged triumphant over the defeated ideas of Marxist totalitarianism, which found its fullest expression in Soviet Russia of the East.

Samuel Huntington’s ideas of what the West embodied were not much different, but he diverged from Fukuyama in his vision of what the world’s future likely entailed. For Huntington, the coming years and decades would see a “clash of civilizations,” a conflict of the most basic sort between the West and the great civilizations of the world as we know it. He saw nothing certain about the global triumph of any particular civilizational expression, including the West. Indeed, Huntington contends that it is only the West that even believes in universal ideals, and that all of the non-Western civilizations—whether Chinese, Islamic, or otherwise—are all partial in their visions. Therefore, we see here in the latest debate about the West a return of the Comtean question: Will the West become a universal civilization, or will it endure as one of many civilizations forever in conflict with each other? While we may have some agreement on what the West stands for, we may have less confidence in its future in the world.

The history is complex, indeed. But Varouxakis also raises the question of whether Western Civilization—however one defines it—is something to defend in the first place. He considers this question several times in the book, but perhaps none more poignantly than in the Great War itself. For example, there were many who noted the hypocrisy of the “Western powers” that suddenly found common cause with the long-excluded Russia in their fight against Germany and the Central Powers. But perhaps more troubling is what it says about a civilization when it produces not the peace and altruism long promised by its founder, but instead destruction on a scale that had never been seen before in human history. One could likewise ask: What kind of civilization deliberately excludes and exploits the weakest members within its borders, such as in the treatment of African Americans in the United States and of those in the furthest regions of the colonial empires of Europe? This crisis of confidence and feeling of decline continued through the interwar years, as Oswald Spengler expresses in his Decline of the West, a fitting rejoinder to the optimism of Comte’s Western utopia.

And so, perhaps the best way to conclude for readers of all sorts—but especially Christians—is to offer two words of caution. The first is to those who would defend the “West” and “Western Civilization” as something either resonant with or even inspired by a Judeo-Christian worldview. And that word is simple: the origins of the idea of the West in one of its most dominant forms (the Comtean one) and in its subsequent historical uses is either non-Christian or even anti-Christian. Indeed, I went into the book expecting a heavy dose of Judeo-Christian connections to the idea of the West, and while the link is not completely absent, I was struck by its muted nature.

Besides the post-Christian progressive vision of Comte himself, consider the voice of Black writer Richard Wright as one representative example to follow in the Frenchman’s footsteps. As someone who identified with the West, he considered “the content of [his] Westernness [residing] fundamentally…in [his] secular outlook upon life.” The progress of the West would be realized the more it emancipated itself from the influence of “mystical powers” or the priests who would speak in their name. Armed with the tools of trial-and-error pragmatism, human life can be sustained without recourse to divine help. A West liberated from divine help is a West worth preserving, at least according to Wright.

Overall, the West as an idea has many champions who are quite open in their antipathy toward the Christian religion, and it would be foolish to ignore those influences on the meaning and use of the term for us today. Still, the second and final note I’d like to offer is a bit more optimistic. In the concluding chapter, Varouxakis urges readers to move from the parochialism of “Western” ideas to adopt a language that is universal in its appeal. What, after all, was so attractive about any of the Western projects that Varouxakis so painstakingly chronicles? It was always their global appeal.

Altruism, sympathy, love for others, freedom, individualism, democracy, capitalism. These are not ideals that belong to just a few but rightfully can be embraced by all of God’s creatures in different places, at different times, and in different ways. Certainly for Christians who embrace a global faith, the least we can do is see the inheritance of the “West,” however defined, as a mixed bag of common grace insights and ideas in rebellion against God, combined with the perspective that none of what is worth keeping in the West should ever be kept from those who would embrace its ideals…

Eminently worth reading in full: “The Idea of the West” from @mereorthodoxy.bsky.social.

For a look at the concept in current context/practice: “The Rest take on the West,” from @noemamag.com.

* Gandhi’s response when asked, “what do you think of western civilization?”

###

As we ponder perplexingly plastic paradigms, we might recall that it was on this date in 1957 that “Whole Lotta Shakin’ Going On” by Jerry Lee Lewis peaked at #3 on the US pop singles charts (though it topped the R&B and country charts shortly after). It was a cover of a 1955 release by Big Maybelle of a song written by Dave “Curlee” Williams (and sometimes also credited to James Faye “Roy” Hall). Lewis, with session drummer Jimmy Van Eaton and guitarist Roland Janes, had recorded the song at Sun Records in just one take.
