Posts Tagged ‘Ernest Hemingway’

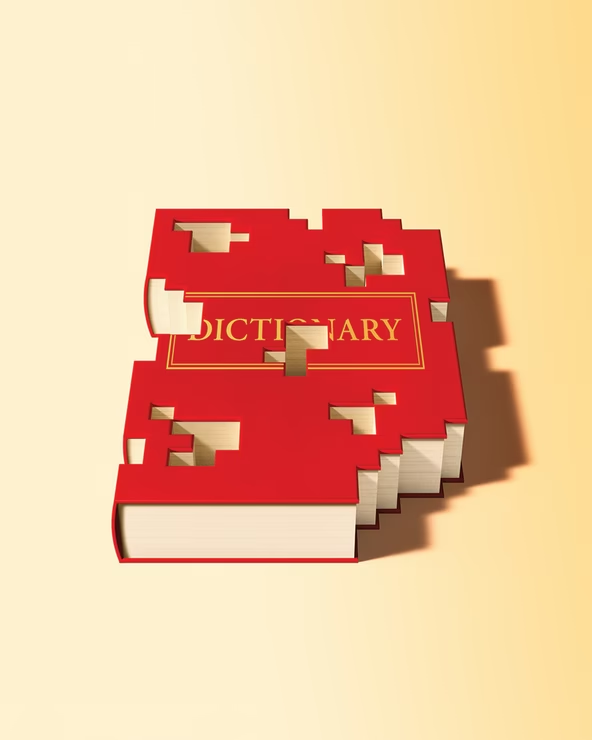

“I was reading the dictionary. I thought it was a poem about everything.”*…

“Obsolete (adj.): no longer in use or no longer useful”… Stefan Fatsis on the challenges faced by the purveyors of today’s dictionaries…

In 2015, I settled in at the Springfield, Massachusetts, headquarters of Merriam-Webster, America’s most storied dictionary company. My project was to document the ambitious reinvention of a classic, and I hoped to get some definitions of my own into the lexicon along the way. (A favorite early drafting effort, which I couldn’t believe wasn’t already included, was dogpile : “a celebration in which participants dive on top of each other immediately after a victory.”) Merriam-Webster’s overhaul of its signature work, Webster’s Third New International Dictionary, Unabridged—a 465,000-word, 2,700-page, 13.5-pound doorstop published in 1961 and never before updated—was already in full swing. The revision, which would be not a hardback book but an online-only edition, requiring a subscription, was expected to take decades.

Not long after my arrival, though, everything changed. Pageviews were declining for Merriam-Webster.com, the company’s free, ad-driven revenue engine: Tweaks to Google’s algorithms had punished Merriam’s search results. The company had always been lean and profitable, but the financial hit was real. Merriam’s parent, Encyclopedia Britannica, was facing challenges of its own—who needed an encyclopedia in a Wikipedia world?—and ordered cuts. Merriam laid off more than a dozen staffers. Its longtime publisher, John Morse, was forced into early retirement. The revision of Merriam’s unabridged masterpiece was abandoned.

Call it the paradox of the modern dictionary. We’re in a golden age for the study and appreciation of words—a time of “meta awareness” of language, as one lexicographer put it to me. Dictionaries are more accessible than ever, available on your laptop or phone. More people use them than ever, and dictionary publishers now possess the digital wherewithal to closely track that use. Podcasts, newsletters, and Words of the Year have popularized neologisms, etymologies, and usage trends. Meanwhile, analytical software has revolutionized linguistic inquiry, enabling greater understanding of the ways language works—when, how, and why words break out; the specific contexts for expressions and idioms. And all of that was true long before the rise of AI.

But these advances are also strangling the business of the dictionary. Definitions, professional and amateur, are a click away, and most people don’t care or can’t tell whether what pops up in a search is expert research, crowdsourced jottings, scraped data, or zombie websites. Before he left Merriam, Morse told me that legacy dictionaries face the same growing popular distrust of traditional authorities that media and government have encountered…

[Fatsis recounts the recent troubled commercial history of lexicography: Merriam, Dictionary.com, et al…]

It’s hard to know what future business model might save the industry. Getting swallowed by a tech giant expecting hockey-stick growth has proved untenable. A billionaire willing to let the dictionary just be the dictionary—a self-sustaining company with a modest staff performing an outsize cultural job that might not always be profitable—looks less likely after Dan Gilbert’s foray. A grand national dictionary project—some collaboration among government, private, nonprofit, and academic institutions—feels like the Platonic ideal. But with universities and intellectual inquiry under assault in 2025, I’m not holding my breath.

At Merriam-Webster, the standard capitalist model is working, at least for now, as is its hybrid print-digital approach. The publisher has rebounded from its mid-2010s struggles. It was a social-media darling during the first Trump administration, racking up likes and retweets for its smart-alecky and politically subversive social-media persona. (When Donald Trump tweeted “unpresidented” instead of “unprecedented,” the Merriam account responded: “Good morning! The #WordOfTheDay is … not ‘unpresidented’. We don’t enter that word. That’s a new one.”) Britannica invested in software, hardware, and humans to enable Merriam to better navigate Google’s algorithms. Merriam added a phalanx of games, including Wordle knockoffs and a dictionary-based crossword, to attract and retain visitors.

Merriam has outlasted a long line of American dictionaries. But plenty of household media names have been humbled by the shifting habits of digital consumers. Even before Google’s AI Overview began taking clicks from definitions written by flesh-and-bone lexicographers, the trajectory of the industry was clear.

After Merriam shut down its online unabridged revision, I stuck around the company’s 85-year-old brick headquarters, reporting and defining. I eventually drafted about 90 definitions. Most of them didn’t make the cut. But a handful are enshrined online, including politically charged terms such as microaggression and alt-right, and whimsical ones such as sheeple and, yes, dogpile.

While I’m proud of these small contributions to lexicography, my wanderings through dictionary culture convinced me of something far more important: the urgent need to save this slowly fading business. Twenty years ago, an estimated 200 full-time commercial lexicographers were working in the United States; today the number is probably less than a quarter of that. At a time when contentious words dominate our conversations—think insurrection and fascism and fake news and woke—the need for dictionaries to chronicle and explain language, and serve as its watchdog, has never been greater…

Adapted from Fatsis’ new book, Unabridged: The Thrill of and Threat to the Modern Dictionary: “Is This the End of the Dictionary?” @stefanfatsis.bsky.social in @theatlantic.com.

* Steven Wright

###

As we look it up, we might send carefully chosen words of birthday greeting to William Cuthbert Faulkner; he was born on this date in 1897. A writer of novels, short stories, poetry, essays, screenplays, and one play, Faulkner is best remembered for his novels (e.g., The Sound and the Fury, As I Lay Dying, and Light in August) and stories set in “Yoknapatawpha County,” a setting largely based on Lafayette County, Mississippi, where Faulkner spent most of his life. They earned him the 1949 Nobel Prize for Literature.

Faulkner inadvertently expressed (what would pass in the context of the piece above for) confidence in the longevity of Ernest Hemingway’s work: in 1951 he observed that “he has never been known to use a word that might send a reader to the dictionary.”

On the other hand…

The past is never dead. It’s not even past.

From Requiem for a Nun, Act I, Scene III, by William Faulkner

“We account the whale immortal in his species, however perishable in individuality”*…

A remarkable new study on how whales behaved when attacked by humans in the 19th century has implications for the way they react to changes wreaked by humans in the 21st century.

The paper, published by the Royal Society [in March], is authored by Hal Whitehead and Luke Rendell, pre-eminent scientists working with cetaceans, and Tim D Smith, a data scientist, and their research addresses an age-old question: if whales are so smart, why did they hang around to be killed? The answer? They didn’t.

Using newly digitised logbooks detailing the hunting of sperm whales in the north Pacific, the authors discovered that within just a few years, the strike rate of the whalers’ harpoons fell by 58%. This simple fact leads to an astonishing conclusion: that information about what was happening to them was being collectively shared among the whales, who made vital changes to their behaviour. As their culture made fatal first contact with ours, they learned quickly from their mistakes.

“Sperm whales have a traditional way of reacting to attacks from orca,” notes Hal Whitehead… Before humans, orca were their only predators, against whom sperm whales form defensive circles, their powerful tails held outwards to keep their assailants at bay. But such techniques “just made it easier for the whalers to slaughter them”, says Whitehead.

It was a frighteningly rapid killing, and it accompanied other threats to the ironically named Pacific. From whaling and sealing stations to missionary bases, western culture was imported to an ocean that had remained largely untouched. As Herman Melville, himself a whaler in the Pacific in 1841, would write in Moby-Dick (1851): “The moot point is, whether Leviathan can long endure so wide a chase, and so remorseless a havoc.”

Sperm whales are highly socialised animals, able to communicate over great distances. They associate in clans defined by the dialect pattern of their sonar clicks. Their culture is matrilinear, and information about the new dangers may have been passed on in the same way whale matriarchs share knowledge about feeding grounds. Sperm whales also possess the largest brain on the planet. It is not hard to imagine that they understood what was happening to them.

The hunters themselves realised the whales’ efforts to escape. They saw that the animals appeared to communicate the threat within their attacked groups. Abandoning their usual defensive formations, the whales swam upwind to escape the hunters’ ships, themselves wind-powered. ‘This was cultural evolution, much too fast for genetic evolution,’ says Whitehead.

And in turn, it evokes another irony. Now, just as whales are beginning to recover from the industrial destruction by 20th-century whaling fleets – whose steamships and grenade harpoons no whale could evade – they face new threats created by our technology. ‘They’re having to learn not to get hit by ships, cope with the depredations of longline fishing, the changing source of their food due to climate change,’ says Whitehead. Perhaps the greatest modern peril is noise pollution, one they can do nothing to evade.

Whitehead and Rendell have written persuasively of whale culture, expressed in localised feeding techniques as whales adapt to shifting sources, or in subtle changes in humpback song whose meaning remains mysterious. The same sort of urgent social learning the animals experienced in the whale wars of two centuries ago is reflected in the way they negotiate today’s uncertain world and what we’ve done to it.

As Whitehead observes, whale culture is many millions of years older than ours. Perhaps we need to learn from them as they learned from us…

Learning from the ways that whales learn: “Sperm whales in 19th century shared ship attack information.”

* Herman Melville, Moby-Dick

###

As we admire adaptation, we might recall that it was on this date in 1953 that Ernest Hemingway won the Pulitzer Prize for his short novel The Old Man and the Sea. It was cited by the Nobel Committee as contributing to their awarding of the Nobel Prize in Literature to Hemingway the following year.

The Old Man and the Sea reinvigorated Hemingway’s literary reputation and prompted a reexamination of his entire body of work. The novel was initially received with much enthusiasm by critics and the public alike; many critics favorably compared it with Moby-Dick.

“Everything is relative except relatives, and they are absolute”*…

Thanksgiving is upon us, so many American readers will be gathering as clans. Thankfully, our friends at Flowing Data have come up with a handy graphic reference to help us place and navigate those confusing familial ties. As they note (quoting Wikipedia), there is an underlying mathematical logic to it all…

There is a mathematical way to identify the degree of cousinship shared by two individuals. In the description of each individual’s relationship to the most recent common ancestor, each “great” or “grand” has a numerical value of 1. The following examples demonstrate how this is applied.

Example: If person one’s great-great-great-grandfather is person two’s grandfather, then person one’s “number” is 4 (great + great + great + grand = 4) and person two’s “number” is 1 (grand = 1). The smaller of the two numbers is the degree of cousinship. The two people in this example are first cousins. The difference between the two people’s “numbers” is the degree of removal. In this case, the two people are thrice (4 − 1 = 3) removed, making them first cousins three times removed.
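For readers who would rather let a machine do the counting, the rule above can be sketched in a few lines of Python. This is only an illustration, not anything supplied by Flowing Data or Wikipedia: the names `count_generations` and `cousin_relationship` are made up here, and the sketch assumes each relationship to the common ancestor is written with hyphenated "great" and "grand" prefixes (as in "great-grandmother").

```python
def count_generations(relationship: str) -> int:
    """Count each "great" or "grand" in a label such as "great-great-grandfather"."""
    # Assumed input format: hyphen-separated prefixes, e.g. "great-grandmother".
    label = relationship.lower()
    return label.count("great") + label.count("grand")


def cousin_relationship(ancestor_to_one: str, ancestor_to_two: str) -> tuple[int, int]:
    """Return (degree of cousinship, degree of removal) for two people.

    Each argument describes the most recent common ancestor from one
    person's point of view.
    """
    n1 = count_generations(ancestor_to_one)
    n2 = count_generations(ancestor_to_two)
    degree = min(n1, n2)       # the smaller "number" is the degree of cousinship
    removal = abs(n1 - n2)     # the difference is the degree of removal
    return degree, removal


# The example from the text: first cousins, three times removed.
degree, removal = cousin_relationship("great-great-great-grandfather", "grandfather")
print(degree, removal)  # prints: 1 3
```

A degree of 0 (say, when the common ancestor is simply one person's "father") falls outside the rule as quoted, so the sketch makes no attempt to name that relationship.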

More at “Chart of Cousins.”

* Alfred Stieglitz

###

As we pass the gravy, we might recall that it was on this date in 1912 that successful businessman Sherwood Anderson, then 36, left wife, family, and job in Elyria, Ohio, to become a writer. A novelist and short story writer, he’s best-known for the short story sequence Winesburg, Ohio, which launched his career, and for the novel Dark Laughter, his only bestseller. But his biggest impact was probably his formative influence on the next generation of American writers– William Faulkner, Ernest Hemingway, John Steinbeck, and Thomas Wolfe, among others– who cited Anderson as an important inspiration and model. (Indeed, Anderson was instrumental in gaining publication for Faulkner and Hemingway.)

Special Summer Cheesecake Edition…

From Flavorwire, “Vintage Photos of Rock Stars In Their Bathing Suits.”

(Special Seasonal Bonus: from Sylvia Plath and Anne Sexton to Ernest Hemingway and Scott Fitzgerald, “Take a Dip: Literary Greats In Their Bathing Suits.”)

As we reach for the Coppertone, we might wish a soulful Happy Birthday to musician Isaac Hayes; he was born on this date in 1942. An early stalwart at legendary Stax Records (e.g., Hayes co-wrote and played on the Sam and Dave hits “Soul Man” and “Hold On, I’m Coming”), Hayes began to come into his own after the untimely demise of Stax’s headliner, Otis Redding. First with his album Hot Buttered Soul, then with the score– including most famously the theme– for Shaft, Hayes became a star, and a pillar of the more engaged Black music scene of the 70s. Hayes remained a pop culture force (e.g., as the voice of Chef on South Park) until his death in 2008. (Note: some sources give Hayes’s birth date as August 20; but county records in Covington, TN, his birthplace, suggest that it was the 6th.)

Your correspondent is headed for his ancestral seat, and for the annual parole check-in and head-lice inspection that does double duty as a family reunion. Connectivity in that remote location being the challenged proposition that it is, these missives are likely to be in abeyance for the duration. Regular service should resume on or about August 16.

Meantime, lest readers be bored, a little something to ponder:

Depending who you ask, there’s a 20 to 50 percent chance that you’re living in a computer simulation. Not like The Matrix, exactly – the virtual people in that movie had real bodies, albeit suspended in weird, pod-like things and plugged into a supercomputer. Imagine instead a super-advanced version of The Sims, running on a machine with more processing power than all the minds on Earth. Intelligent design? Not necessarily. The Creator in this scenario could be a future fourth-grader working on a science project.

Oxford University philosopher Nick Bostrom argues that we may very well all be Sims. This possibility rests on three developments: (1) the aforementioned megacomputer. (2) The survival and evolution of the human race to a “posthuman” stage. (3) A decision by these posthumans to research their own evolutionary history, or simply amuse themselves, by creating us – virtual simulacra of their ancestors, with independent consciousnesses…

Read the full story– complete with a consideration of the more-immediate (and less-existentially-challenging) implications of “virtualization”– and watch the accompanying videos at Big Think… and channel your inner Philip K. Dick…

Y’all be good…

“First Impressions”…

… was the tentative title with which Jane Austen worked before she settled on Pride and Prejudice.

George Orwell’s publisher convinced him that “The Last Man in Europe” simply wasn’t going to send copies flying off booksellers’ shelves, prompting Orwell to switch to his back-up title, 1984.

Discover more literary “might-have-beens,” featuring F. Scott Fitzgerald, Ernest Hemingway, Joseph Heller, Bram Stoker, and others– at Mentalfloss.

As we think again about our vanity plate orders, we might recall that it was on this date in 1943 that then-26-year-old poet Robert Lowell, scion of an old Boston family that had included a President of Harvard, an ambassador to the Court of St. James, and the ecclesiastic who founded St. Mark’s School, was sentenced to jail for a year for evading the draft. An ardent pacifist, Lowell refused his service in objection to saturation bombing in Europe. He served his time in New York’s West Street jail.

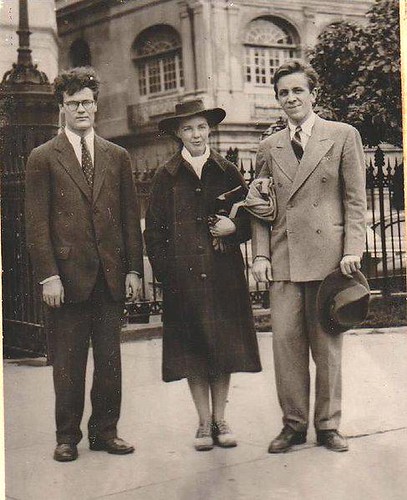

Lowell (left) in 1941, with (his then wife) novelist Jean Stafford, and their friend, novelist and short-story writer Peter Taylor, at Kenyon College, where they studied with John Crowe Ransom (source)

