Posts Tagged ‘data visualization’

“Without data, you’re just another person with an opinion”*…

… and that data can be even more useful if we can visualize it. Andrew Zolli introduces a new opportunity…

Whether we’re contending with food shocks, responding to disasters, preventing the next pandemic, helping communities adapt to a changing climate, or just delivering basic governmental services, one constant runs through it all: people. Where we live, how we move, when we gather or flee – these human patterns shape the arc of every modern challenge. Without a deep and dynamic understanding of those patterns, meaningful action becomes not just harder, it becomes guesswork.

That’s why I’m so excited about our ongoing collaboration with colleagues at the Microsoft AI for Good Lab and the Institute for Health Metrics and Evaluation to develop the world’s most up-to-date, highly accurate, high resolution population density maps. Harnessing the power of Planet’s high-frequency, high-resolution satellite imagery, the AI for Good team’s artificial intelligence expertise, and IHME’s deep demographic modeling capabilities, these population maps allow us to estimate how many people we’re likely to find in every 40 sq meter patch of Earth, in every country of the world. And because the underlying data is updated quarterly, they also allow us to see change over time.

This week, we announced the completion of the first phase of this work at the United Nations AI For Good Global Summit, held in Geneva. We’ve been piloting the use of these population maps as part of the UN’s Early Warnings For All Initiative, which seeks to ensure that everyone on Earth is protected from hazardous weather, water, and climate events. In an early use-case, by overlaying population data with maps of mobile connectivity, we’ve been able to identify unconnected populations that might not be reachable in a crisis.

And that’s just one of what are likely hundreds – even thousands – of ways this kind of population data can be put to work. Knowing where people are settling, and how those patterns are changing, is foundational to everything from public health campaigns to the design of infrastructure and services. If we want to reduce wildfire risk, for example, we need to understand where human communities are pressing into forested frontiers. If we want to evacuate people ahead of an oncoming storm, we need to know how many lives are in harm’s way. And if we want to ensure people aren’t displaced by unlivable heat, we have to overlay human presence with climate exposure.

You can learn more and sign up to explore a coarser (but compelling!) (40km/pixel) visualization of the population data. At the AI for Good Summit, we also announced an Early Access Program for a carefully selected number of trusted organizations who will explore applications of the data and give feedback. If that sounds like it might be of interest, please contact services@healthdata.org…

A new tool for visualizing the world in which we live: “Everyone, Everywhere: Mapping Humanity’s Changing Footprint in Unprecedented Detail,” from @andrewzolli.bsky.social and his colleagues at Planet.
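For the curious, the overlay Zolli describes (population against mobile connectivity) can be sketched in a few lines. This is only a toy illustration: the two small grids, the function name `unconnected_population`, and the idea of a simple yes/no coverage flag per cell are all assumptions made for the example, not details of the actual Planet, Microsoft, and IHME pipeline.

```python
def unconnected_population(population, covered):
    """Sum the people living in grid cells that have no mobile coverage.

    `population` and `covered` are hypothetical, same-shaped grids:
    one holds an estimated head count per cell, the other a True/False
    coverage flag per cell.
    """
    total = 0
    for pop_row, cov_row in zip(population, covered):
        for people, has_signal in zip(pop_row, cov_row):
            if not has_signal:
                total += people
    return total


# Made-up data for illustration only.
population = [
    [120, 0, 35],
    [60, 410, 5],
]
covered = [
    [True, True, False],
    [False, True, True],
]

print(unconnected_population(population, covered))
```

The same cell-by-cell comparison is what would let one overlay population with any other layer mentioned in the piece, such as storm tracks or heat exposure.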

###

As we get down with data, we might spare a thought for a spiritual ancestor of Planet’s, Denis Diderot; he died on this date in 1784. A philosopher, art critic, and writer, he is best known for serving as co-founder, chief editor, and contributor to the Encyclopédie along with Jean le Rond d’Alembert.

The Encyclopédie is most famous for representing the thought of the Enlightenment. According to Denis Diderot in the article “Encyclopédie”, the Encyclopédie‘s aim was “to change the way people think” and for people to be able to inform themselves and to know things. He and the other contributors advocated for the secularization of learning away from the Jesuits. Diderot wanted to incorporate all of the world’s knowledge into the Encyclopédie and hoped that the text could disseminate all this information to the public and future generations. Thus, it is an example of democratization of knowledge.

It was also the first encyclopedia to include contributions from many named contributors, and it was the first general encyclopedia to describe the mechanical arts. In the first publication, seventeen folio volumes were accompanied by detailed engravings. Later volumes were published without the engravings, in order to better reach a wide audience within Europe…

– source

“Read the best books first, or you may not have a chance to read them at all”*…

Ah, but “good”?… Past a certain level of quality, our definitions of “good”– that’s to say, the books that entertain and enlighten– vary for each of us. How to choose? Literature-Map is here to help…

The Literature-Map is part of Gnod, the Global Network of Discovery.

It is based on Gnooks, Gnod’s literature recommendation system. The more people like an author and another author, the closer together these two authors will move on the Literature-Map.

Help in finding your next book: “The Literature-Map.”
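The rule quoted above (the more readers like both of two authors, the closer those authors sit) can be sketched roughly as follows. Gnod does not spell out its actual method in the passage, so the co-like counting and the `1 / (1 + count)` distance here are assumptions chosen for illustration, as are the sample readers and the function names.

```python
from collections import Counter
from itertools import combinations


def co_like_counts(readers):
    """Count, for every pair of authors, how many readers like both."""
    counts = Counter()
    for liked in readers:
        for a, b in combinations(sorted(set(liked)), 2):
            counts[(a, b)] += 1
    return counts


def distance(counts, a, b):
    """Hypothetical map distance: shrinks as more readers like both authors."""
    pair = tuple(sorted((a, b)))
    return 1 / (1 + counts[pair])


# Made-up reading lists, one per reader.
readers = [
    ["Austen", "Brontë", "Eliot"],
    ["Austen", "Brontë"],
    ["Austen", "Verne"],
]
counts = co_like_counts(readers)

for a, b in [("Austen", "Brontë"), ("Austen", "Verne"), ("Brontë", "Verne")]:
    print(a, b, round(distance(counts, a, b), 2))
```

In this toy data Austen and Brontë share two readers, so they land closer together than Austen and Verne, who share one; Brontë and Verne share none and sit farthest apart.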

* Henry David Thoreau, A Week on the Concord and Merrimack Rivers

###

As we turn the page, we might recall that this date in 1935 was a big one for the book business:

Allen Lane, Chairman of the London publisher The Bodley Head, was returning home after traveling with author Agatha Christie and her husband. At the train station, he browsed the kiosks looking for something to read on his way home. All he could find were magazines or low-quality paperback stories that he had no interest in reading. Then the thought occurred to him that people, like himself, might be more inclined to read good quality books [literature in paperback was then mainly poor quality lurid fiction] if they were more affordable. And since he was in the position to help build up lagging sales for his company, he ventured into printing previously hard-back books into a paperback format. The first was released on this day in 1935…

– source

Penguin Books featured no photos and were priced at about a fifteenth of the price of a hardcover book. The traditional book trade initially resisted; but the purchase of 63,000 books by Woolworths paid for the project outright, confirmed its worth, and allowed Lane to establish Penguin as a separate business in 1936. Indeed, by March 1936, ten months after the company’s launch, one million Penguin books had been printed.

[More here]

“Any chart, no matter how well designed, will mislead us if we don’t pay attention to it. The world cannot be understood without numbers. And it cannot be understood with numbers alone.”*…

Spencer Greenberg on the critical importance of thinking critically about the charts and graphs that we constantly consume…

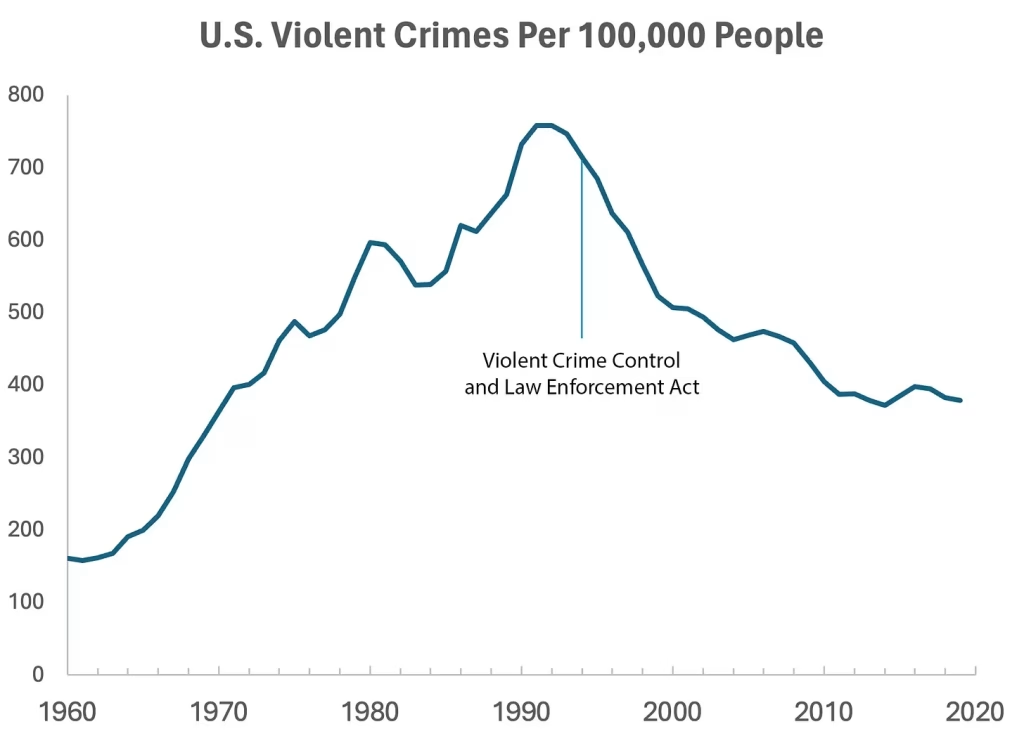

In 1994, the U.S. Congress passed the largest crime bill in U.S. history, called the Violent Crime Control and Law Enforcement Act. The bill allocated billions of dollars to build more prisons and hire 100,000 new police officers, among other things. In the years following the bill’s passage, violent crime rates in the U.S. dropped drastically, from around 750 offenses per 100,000 people in 1990 to under 400 in 2018.

But can we infer, as this chart seems to ask us to, that the bill caused the drop in crime?

As it turns out, this chart wasn’t put together by sociologists or political scientists who’ve studied violent crime. Rather, we—a mathematician and a writer—devised it to make a point: Although charts seem to reflect reality, they often convey narratives that are misleading or entirely false.

Upon seeing that violent crime dipped after 1990, we looked up major events that happened right around that time—selecting one, the 1994 Crime Bill, and slapping it on the graph. There are other events we could have stuck on the graph just as easily that would likely have invited you to construct a completely different causal story. In other words, the bill and the data in the graph are real, but the story is manufactured.

Perhaps the 1994 Crime Bill really did cause the drop in violent crime, or perhaps the causality goes the other way: the spike in violent crime motivated politicians to pass the act in the first place. (Note that the act was passed slightly after the violent crime rate peaked!)

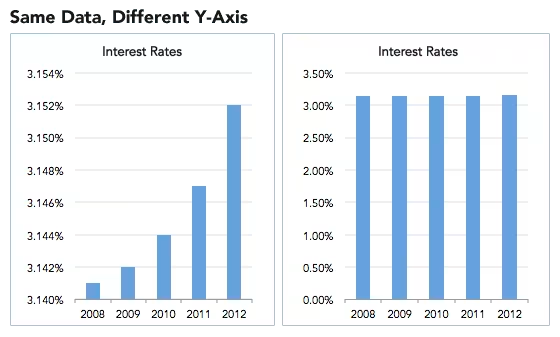

Charts are a concise way not only to show data but also to tell a story. Such stories, however, reflect the interpretations of a chart’s creators and are often accepted by the viewer without skepticism. As Noah Smith and many others have argued, charts contain hidden assumptions that can drastically change the story they tell.

This has important consequences for science, which, in its ideal form, attempts to report findings as objectively as possible. When a single chart can be the explanatory linchpin for years of scientific effort, unveiling a data visualization’s hidden assumptions becomes an essential skill for determining what’s really true. As physicist Richard Feynman once said: In science, “the first principle is that you must not fool yourself, and you are the easiest person to fool.” What we mean to say is—don’t be fooled by charts…

[Greenberg unpacks a couple of powerful examples…]

… to avoid producing a chart that misleads scientists, which misleads journalists, which misleads the public, and which then contributes to widespread confusion, you must think carefully about what you actually aim to measure. Which representation of the data best reflects the question being asked and relies on the sturdiest assumptions?

After all, scientific charts are a means to read data rather than an explanation of how that data is collected. The explanation comes from a careful reading of methods, parameters, definitions, and good epistemic practices like interrogating where data comes from and what could be motivating the researchers who produced it.

In the end, the story a chart tells is still just that—a story—and to be a discerning reader, you must reveal and interrogate the assumptions that steer those narratives…

Eminently worth reading in full: “How charts can inadvertently manipulate reality,” from @spencrgreenberg.bsky.social.

* Alberto Cairo, How Charts Lie: Getting Smarter about Visual Information

###

As we ferret out the facts, we might recall that it was on this date in 1874 that Florence Nightingale became the first female President of the Royal Statistical Society.

Famed for her work as a nurse in the Crimean War, she went on to found training facilities and nursing homes– pioneering both medical training for women and what is now known as Social Entrepreneuring. Less well-known are Nightingale’s contributions to epidemiology, statistics, and the visual communication of data in the field of public health. Always good at math, she pioneered the use of the polar area chart (the equivalent to a modern circular histogram or rose diagram) and popularized the pie chart (which had been developed in 1801 by William Playfair). Nightingale later became an honorary member of the American Statistical Association.

“Diagram of the causes of mortality in the army in the East” by Florence Nightingale, an example of the polar area diagram (AKA, the Nightingale rose diagram) source

“The story of the events and the people who, over centuries, came together to bring us in from the cold and to wrap us in a warm blanket of technology is a matter of vital importance, since more and more of that technology infiltrates every aspect of our lives”*…

From Étienne Fortier-Dubois, the Historical Tech Tree, “a timeline to visualize the full history of all major technologies (or 1,780 of them, at least), from 3.3 million years ago to today. More importantly, it also contains more than 2,000 connections between them: prerequisites, improvements, inspirations: anything that allows you to understand how one thing led to another”…

The historical tech tree is a project by Étienne Fortier-Dubois to visualize the entire history of technologies, inventions, and (some) discoveries, from prehistory to today. Unlike other visualizations of the sort, the tree emphasizes the connections between technologies: prerequisites, improvements, inspirations, and so on.

These connections allow viewers to understand how technologies came about, at least to some degree, thus revealing the entire history in more detail than a simple timeline, and with more breadth than most historical narratives. The goal is not to predict future technology, except in the weak sense that knowing history can help form a better model of the world. Rather, the point of the tree is to create an easy way to explore the history of technology, discover unexpected patterns and connections, and generally make the complexity of modern tech feel less daunting….

How one thing led to another: “Historical Tech Tree.”

See also: “Introducing the Historical Tech Tree.”

* “The story of the events and the people who, over centuries, came together to bring us in from the cold and to wrap us in a warm blanket of technology is a matter of vital importance, since more and more of that technology infiltrates every aspect of our lives. It’s become a life-support system without which we can’t survive. And yet, how much of it do we understand?”- James Burke, in the first episode of Connections.

###

As we ponder progress, we might recall that it was on this date in 1633, following an Inquisition, that the Holy Office in Rome forced Galileo Galilei to recant his view that the Sun, not the Earth, is the center of the Universe in the form in which he presented it. Galileo had used a telescope (1603 on the Timeline) to reach his (correct) conclusion.

Though he recanted, he was put under house arrest, where he effectively remained for the rest of his life. He dedicated his time in restriction to one of his finest works, Two New Sciences, in which he summarised work he had done some forty years earlier on the two sciences now called kinematics and strength of materials; it was published in Holland to avoid the censor. As a result of this work– highly praised by Albert Einstein– Galileo is often called the “father of modern physics.”

“Only in our dreams are we free. The rest of the time we need wages.”*…

The Economist is repurposing one of its famous indices…

Since 1986 The Economist has produced the Big Mac index as a light-hearted gauge of whether currencies are at their “correct” level. The famous burger is a good test of currency valuations because of its global uniformity and ubiquity. The same properties make it a useful way of comparing international salaries: how many Big Macs, in principle, can a typical worker afford with their wages?

The more conventional way of comparing incomes is to convert wages in different countries into a common currency. But that is misleading because exchange rates are volatile. Moreover, one American dollar goes a lot farther in, say, the Philippines than it does in America itself. The Big Mac helps to solve this problem as a ready-made illustration of purchasing power: it represents a bundle of goods (or, rather, a bun of goods) that is identical everywhere, and so it serves as a yardstick of the real cost of things from country to country.

For the Big Mac wage analysis (the MacWage, for short), we started with full-time, pre-tax earnings in 2023 as reported by the OECD, a club of 38 mostly rich countries. We then made a simple adjustment, dividing wages by the price of a Big Mac—all in local currencies. That gave us the number of burgers that the average full-time worker can buy annually.

The results? Americans can perhaps be forgiven for having somewhat expansive waistlines. Although fast-food prices have rocketed since the pandemic, Americans still earn more greasy calories than any others in our analysis [chart below]. The average American worker takes home the equivalent of 14,000 Big Macs in wages for a year of full-time work. At 590 calories a pop, they could buy enough burgers to keep ten adults fed for a year. The Swiss and Danes come, respectively, second and third in MacWages. At the bottom are Mexican workers, who can afford to buy about 2,500 Big Macs with their average annual wages.

A standard objection to any measure of higher incomes in America is that its workers generally get less time off. To factor this in, we looked at average hours worked, based on data from the OECD and the Conference Board, a business-research group. This yields slightly different results (see chart 2). Americans still get more than enough Big Macs—pulling in the equivalent of about 7.4 per hour on the job—but they drop to third in the ranking. The burger champions are the Danes, who earn 8.1 per hour, followed by the Swiss. Looked at another way, the average Dane works for just seven minutes to make enough money to buy a Big Mac. In Mexico—still at the bottom of the rankings after this hourly adjustment—workers must toil for about 57 minutes.

The MacWage is, of course, far from perfect. Danes may celebrate their top performance, but our measure misses how income taxes (which can surpass 50% in Denmark) eat into their burger budgets. Much else of what goes into the cost of living, from housing to transportation, is also barely reflected in the price of burgers. In a developing country like Mexico, where housing is relatively cheap and American fast-food indulgences relatively expensive, a burger-based wage calculation understates how much stuff an average worker can actually afford. Still, as a quick method for comparing incomes around the world, the MacWage is easily digestible…

The purchasing power of average earners across the OECD: “An alternative use for The Economist’s Big Mac index” from @ECONdailycharts in @TheEconomist.
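The arithmetic behind the MacWage is simple enough to sketch. The burger counts (14,000 a year, 8.1 and 7.4 an hour), the 57 minutes for Mexico, and the 590 calories come from the excerpt above; the function names, the sample wage and burger price, and the 2,250-calorie daily diet are assumptions added purely for illustration.

```python
def big_macs_per_year(annual_wage, big_mac_price):
    """Annual pre-tax wage divided by the local Big Mac price (same currency)."""
    return annual_wage / big_mac_price


def minutes_per_big_mac(big_macs_per_hour):
    """Working minutes needed to earn one burger."""
    return 60 / big_macs_per_hour


def adults_fed(burgers, kcal_per_burger=590, kcal_per_adult_day=2250, days=365):
    """Adults who could live on the burgers for `days` days.

    The 2,250 kcal/day diet is an assumed round figure, not from the article.
    """
    return burgers * kcal_per_burger / (kcal_per_adult_day * days)


# Hypothetical wage and price, chosen only to show the division.
print(big_macs_per_year(annual_wage=80_000, big_mac_price=5.70))

# Figures quoted in the excerpt.
print(minutes_per_big_mac(8.1))   # Danes, 8.1 burgers per hour
print(minutes_per_big_mac(7.4))   # Americans, 7.4 burgers per hour
print(60 / 57)                    # Mexico: 57 minutes per burger, as burgers per hour
print(adults_fed(14_000))         # a year of American MacWages, in adults fed
```

Under that assumed diet, 14,000 burgers do come out at roughly ten adult-years of calories, and 8.1 burgers an hour works out to a little over seven minutes each, in line with the figures in the piece.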

* Terry Pratchett

###

As we supersize that, we might recall that it was on this date in 1979 that the U.S. government agreed to a bailout of the Chrysler Corporation. The smallest of the “Big Three” automakers, but still the 10th largest company in America, Chrysler was suffering from a combination of bad management decisions and increased competition from Japanese and German automakers. Facing a $500 million loss for the year (and probably bankruptcy), newly-installed CEO Lee Iacocca asked the government for a guarantee on a $1.5 billion loan package. In return for detailed plans from Chrysler showing both how the company would right its ship and how other constituents (employees, suppliers, lenders) would make concessions, the Carter Administration (which feared that a Chrysler failure could lead to a “depression”– and depression-level unemployment– in the auto industry) agreed. In return for its guarantee, the government received stock warrants in the company.

Chrysler did turn itself around: it proceeded to introduce the “K-Car” line, then mini-vans, then the earliest generation of SUVs. The company repaid the government-guaranteed debt ahead of schedule; the Treasury made about $500 million on its warrants.

But of course, nearly thirty years later, in 2008, Chrysler received billions in a new bailout from the U.S. government in the aftermath of the financial crisis that decimated automotive sales over the following few years. Chrysler filed for Chapter 11 bankruptcy in April 2009, before being acquired in total by Fiat in 2014.
