Posts Tagged ‘John Mauchly’

“Technology challenges us to assert our human values, which means that first of all, we have to figure out what they are”*…

As we head into the weekend, some food for thought…

A decade ago, the world was, at once, both the seed of today and a very different place: In what was considered one of the biggest political upsets in American political history (and the fifth and most recent presidential election in which the winning candidate lost the popular vote), Donald Trump was elected to his first term. The U.K. chose Brexit. The stock market finished strong, with the Dow Jones, S&P 500, and Nasdaq reaching new highs. (In the 10 years that have followed, the Dow has risen about 150%; the S&P 500, roughly 400%; and the NASDAQ has roughly sextupled.)

It was a big year for pop culture, marked by Beyoncé’s Lemonade, the massive Pokémon Go craze, Netflix’s rise with Stranger Things, the Rio Olympics, and the loss of icons like David Bowie and Prince.

It was also a big year in tech: Russian hacking and disinfo (especially on Facebook) were a huge story– as was Apple’s elimination of the headphone jack in the iPhone 7. Theranos collapsed, and Wells Fargo was found to have opened millions of accounts without its customers’ permission (for which it was subsequently fined $3 billion). Virtual reality was everywhere in tech companies’ promises and offers, but nowhere in the market. TikTok was launched in 2016, but hadn’t yet become the phenomenon (and avatar of algorithmically driven feeds) that it has become. And in the course of 2016, artificial intelligence made the leap from “science fiction concept” to “almost meaningless buzzword” (though in fairness, 2016 was the year that Google DeepMind’s AlphaGo program triumphed against South Korean Go grandmaster Lee Sedol).

Back in 2016, the estimable Alan Jacobs was pondering the road ahead. In a piece for The New Atlantis, he coined and discussed a series of aphorisms relevant to the future as then he saw it. He begins…

Aphorisms are essentially an aristocratic genre of writing. The aphorist does not argue or explain, he asserts; and implicit in his assertion is a conviction that he is wiser or more intelligent than his readers.

– W. H. Auden and Louis Kronenberger, The Viking Book of Aphorisms

Author’s Note: I hope that the statement above is wrong, believing that certain adjustments can be made to the aphoristic procedure that will rescue the following collection from arrogance. The trick is to do this in a way that does not sacrifice the provocative character that makes the aphorism, at its best, such a powerful form of utterance.

Here I employ two strategies to enable me to walk this tightrope. The first is to characterize the aphorisms as “theses for disputation,” à la Martin Luther — that is, I invite response, especially response in the form of disagreement or correction. The second is to create a kind of textual conversation, both on the page and beyond it, by adding commentary (often in the form of quotation) that elucidates each thesis, perhaps even increases its provocativeness, but never descends into coarsely explanatory pedantry…

[There follows a series of provocations and discussions that feel as relevant– and important– today as they were a decade ago. He concludes…]

… Precisely because of this mystery, we need to evaluate our technologies according to the criteria established by our need for “conviviality.”

I use the term with the particular meaning that Ivan Illich gives it in Tools for Conviviality [here]:

I intend it to mean autonomous and creative intercourse among persons, and the intercourse of persons with their environment; and this in contrast with the conditioned response of persons to the demands made upon them by others, and by a man-made environment. I consider conviviality to be individual freedom realized in personal interdependence and, as such, an intrinsic ethical value. I believe that, in any society, as conviviality is reduced below a certain level, no amount of industrial productivity can effectively satisfy the needs it creates among society’s members.

In my judgment, nothing is more needful in our present technological moment than the rehabilitation and exploration of Illich’s notion of conviviality, and the use of it, first, to apprehend the tools we habitually employ and, second, to alter or replace them. For the point of any truly valuable critique of technology is not merely to understand our tools but to change them — and us…

Eminently worth reading in full, as it’s still all too relevant: “Attending to Technology: Theses for Disputation,” from @ayjay.bsky.social.

Pair with a provocative piece from another fan of Illich, L. M. Sacasas (@lmsacasas.bsky.social): “Surviving the Show: Illich And The Case For An Askesis of Perception.”


###

As we think about tech, we might recall that it was on this date in 1946 that an ancestor of today’s social networks, streaming services, and AIs, the ENIAC (Electronic Numerical Integrator And Computer), was first demonstrated in operation. (It was announced to the public the following day.) The first general-purpose computer (Turing-complete, digital, and capable of being programmed and re-programmed to solve different problems), ENIAC was begun in 1943, as part of the U.S.’s war effort (as a classified military project known as “Project PX”); it was conceived and designed by John Mauchly and J. Presper Eckert of the University of Pennsylvania, where it was built. The finished machine, composed of 17,468 electronic vacuum tubes, 7,200 crystal diodes, 1,500 relays, 70,000 resistors, 10,000 capacitors and around 5 million hand-soldered joints, weighed more than 27 tons and occupied a 30 x 50 foot room– in its time the largest single electronic apparatus in the world. ENIAC’s basic clock speed was 100,000 cycles per second (or Hertz). Today’s home computers have clock speeds of 3,500,000,000 cycles per second or more.
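To put the clock comparison in numbers, here is a minimal Python sketch that simply divides the two figures quoted above (100,000 Hz for ENIAC, 3,500,000,000 Hz for a home computer). The constant and function names are illustrative, not from any source. Treat the result as a floor rather than a measure of the true gap: clock rate ignores how much work each cycle does and how many cores run in parallel, both of which favor the modern machine.

```python
# Figures as quoted in the post; the names here are illustrative only.
ENIAC_CLOCK_HZ = 100_000            # ENIAC's basic clock: 100,000 cycles per second
HOME_PC_CLOCK_HZ = 3_500_000_000    # a 3.5 GHz home computer (the post's "or more" baseline)


def clock_ratio(fast_hz: int, slow_hz: int) -> float:
    """Return how many times more cycles per second fast_hz delivers than slow_hz."""
    if slow_hz <= 0:
        raise ValueError("slow_hz must be positive")
    return fast_hz / slow_hz


if __name__ == "__main__":
    ratio = clock_ratio(HOME_PC_CLOCK_HZ, ENIAC_CLOCK_HZ)
    print(f"A 3.5 GHz clock ticks {ratio:,.0f} times as fast as ENIAC's.")
    # prints: A 3.5 GHz clock ticks 35,000 times as fast as ENIAC's.
```

In other words, by clock rate alone a 3.5 GHz machine ticks 35,000 times for every ENIAC pulse.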

“Man is not disturbed by events, but by the view he takes of them”*…

From Stripe Partners, a framework for rethinking the way we talk about the AI future…

AI is both a new technology and a new type of technology. It is the first technology that learns and that has the potential to outstrip its makers’ capabilities and develop independently.

As Large Language Models bring to life the realities of AI’s potential to operate at unprecedented, ‘human’ levels of sophistication, projections about its future have gained urgency. The dominant framework being applied to identify AI’s potential futures is 165 years old: Charles Darwin’s theory of evolution.

Darwin’s evolutionary framework is rendered most clearly in Dan Hendrycks’ work for the Center for AI Safety, which posits a future where natural selection could cause the most influential future AI agents to have selfish tendencies that might see AIs favour their own agendas over the safety of humankind.

The choice of Natural Selection as a framework makes sense given AI’s emerging status as a quasi-sentient, highly adaptive technology that can learn and grow. The choice is a response to the limitations inherent in existing models for technological adoption which treat technologies as inert tools that only come to life when used by people.

The risk in applying this lens to AI is that it goes too far in assigning independent agency to AI. Estimates on the timing of the emergence of ‘Artificial General Intelligence’ vary, but spending some time with the current crop of Generative AI platforms confirms the view that AIs with intelligences closer to humans’ are some way off. In the interim, using natural selection as a lens to understand AI positions humans further out of the developmental loop than is actually the case. Competitive forces, whether market or military, will shape AI’s development, but these will not be the only forces at play, and direct interaction with humans will be the principal driver of AI’s progress in the near term.

A year ago we wrote about the opportunity to reframe the impact of AI on organisations through the lens of Actor Network Theory (ANT). More than a singular theory, ANT describes an approach to studying social and technological systems developed by Bruno Latour, Michel Callon, Madeleine Akrich and John Law in the early 1980s.

ANT posits that the social and natural world is best understood as dynamic networks of humans and nonhuman actors… In our 2023 piece we suggested that ANT, with its focus on framing society and human-technology interactions in terms of dynamic networks where every actor, whether human or machine, impacts the network, was a useful way of exploring the ways in which AI will impact people, and people will impact AI.

A year on, the value of ANT as a framework for exploring AI’s future has become clearer. The critical point when comparing an ANT frame to an evolutionary one is the way in which the ANT framing highlights how AI will progress with and through people’s interactions with it. When viewed as an actor in a network, not a technology in isolation, AI will never be separate from human interventions…

A provocative argument, well worth reading in full: “Why the debate about the future of AI needs less Darwin and more Latour,” from @stripepartners.

Apposite: “Whose risks? Whose benefits?” from Mandy Brown.

* Epictetus

###

As we reframe, we might recall that it was on this date in 1946 that an ancestor of today’s AIs, the ENIAC (Electronic Numerical Integrator And Computer), was first demonstrated in operation. (It was announced to the public the following day.) The first general-purpose computer (Turing-complete, digital, and capable of being programmed and re-programmed to solve different problems), ENIAC was begun in 1943, as part of the U.S.’s war effort (as a classified military project known as “Project PX”); it was conceived and designed by John Mauchly and J. Presper Eckert of the University of Pennsylvania, where it was built. The finished machine, composed of 17,468 electronic vacuum tubes, 7,200 crystal diodes, 1,500 relays, 70,000 resistors, 10,000 capacitors and around 5 million hand-soldered joints, weighed more than 27 tons and occupied a 30 x 50 foot room– in its time the largest single electronic apparatus in the world. ENIAC’s basic clock speed was 100,000 cycles per second (or Hertz). Today’s home computers have clock speeds of 3,500,000,000 cycles per second or more.

“We know the past but cannot control it. We control the future but cannot know it.”*…

Readers will know of your correspondent’s fascination with– and admiration for– Claude Shannon…

Within engineering and mathematics circles, Shannon is a revered figure. At 21 [in 1937], he published what’s been called the most important master’s thesis of all time, explaining how binary switches could do logic and laying the foundation for all future digital computers. At the age of 32, he published A Mathematical Theory of Communication, which Scientific American called “the Magna Carta of the information age.” Shannon’s masterwork invented the bit, or the objective measurement of information, and explained how digital codes could allow us to compress and send any message with perfect accuracy.

But Shannon wasn’t just a brilliant theoretical mind — he was a remarkably fun, practical, and inventive one as well. There are plenty of mathematicians and engineers who write great papers. There are fewer who, like Shannon, are also jugglers, unicyclists, gadgeteers, first-rate chess players, codebreakers, expert stock pickers, and amateur poets.

Shannon worked on the top-secret transatlantic phone line connecting FDR and Winston Churchill during World War II and co-built what was arguably the world’s first wearable computer. He learned to fly airplanes and played the jazz clarinet. He rigged up a false wall in his house that could rotate with the press of a button, and he once built a gadget whose only purpose when it was turned on was to open up, release a mechanical hand, and turn itself off. Oh, and he once had a photo spread in Vogue.

Think of him as a cross between Albert Einstein and the Dos Equis guy…

From Jimmy Soni (@jimmyasoni), co-author of A Mind At Play: How Claude Shannon Invented the Information Age: “11 Life Lessons From History’s Most Underrated Genius.”

* Claude Shannon

###

As we learn from the best, we might recall that it was on this date in 1946 that an early beneficiary of Shannon’s thinking, the ENIAC (Electronic Numerical Integrator And Computer), was first demonstrated in operation. (It was announced to the public the following day.) The first general-purpose computer (Turing-complete, digital, and capable of being programmed and re-programmed to solve different problems), ENIAC was begun in 1943, as part of the U.S.’s war effort (as a classified military project known as “Project PX”); it was conceived and designed by John Mauchly and J. Presper Eckert of the University of Pennsylvania, where it was built. The finished machine, composed of 17,468 electronic vacuum tubes, 7,200 crystal diodes, 1,500 relays, 70,000 resistors, 10,000 capacitors and around 5 million hand-soldered joints, weighed more than 27 tons and occupied a 30 x 50 foot room– in its time the largest single electronic apparatus in the world. ENIAC’s basic clock speed was 100,000 cycles per second. Today’s home computers have clock speeds of billions of cycles per second.

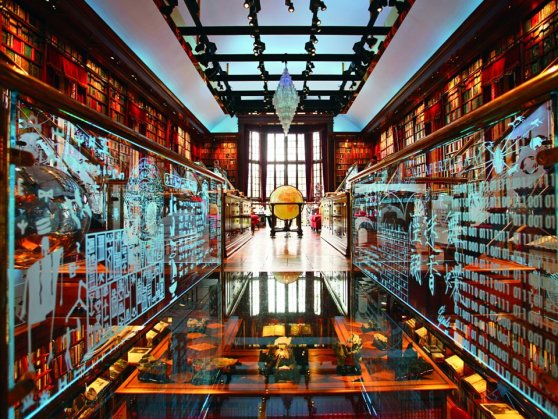

“It is likely that libraries will carry on and survive, as long as we persist in lending words to the world that surrounds us, and storing them for future readers”*…

Many visions of the future lie buried in the past. One such future was outlined by the American librarian Charles Ammi Cutter in his essay “The Buffalo Public Library in 1983”, written a century before in 1883.

Cutter’s fantasy, at times dry and descriptive, is also wonderfully precise:

The [library], when complete, was to consist of two parts, the first a central store, 150 feet square, a compact mass of shelves and passageways, lighted from the ends, but neither from sides nor top; the second an outer rim of rooms 20 feet wide, lighted from the four streets. In front and rear the rim was to contain special libraries, reading-rooms, and work-rooms; on the sides, the art-galleries. The central portion was a gridiron of stacks, running from front to rear, each stack 2 feet wide, and separated from its neighbor by a passage of 3 feet. Horizontally, the stack was divided by floors into 8 stories, each 8 feet high, giving a little over 7 feet of shelf-room, the highest shelf being so low that no book was beyond the reach of the hand. Each reading-room, 16 feet high, corresponded to two stories of the stack, from which it was separated in winter by glass doors.
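Cutter’s stack dimensions lend themselves to a quick back-of-the-envelope check. The minimal Python sketch below uses only his figures (a 150-foot-square store, 2-foot stacks, 3-foot passages, 8 stories of 8 feet each). It assumes, beyond what Cutter says, that n stacks need n - 1 passages with no extra aisle at the walls, and that each front-to-rear stack spans the full 150 feet. The function and constant names are hypothetical.

```python
# Dimensions from Cutter's 1883 description, in feet; names are hypothetical.
STORE_SIDE_FT = 150    # central store, 150 feet square
STACK_WIDTH_FT = 2     # each stack is 2 feet wide
PASSAGE_FT = 3         # passage separating a stack from its neighbour
STORIES = 8            # stack divided by floors into 8 stories
STORY_HEIGHT_FT = 8    # each story 8 feet high


def stacks_across(side_ft: int, stack_ft: int, passage_ft: int) -> int:
    """Count stacks that fit across the store.

    Assumption (not Cutter's): n stacks need n - 1 passages between them,
    with no extra aisle along the walls.
    """
    n = 0
    # Add stacks while one more stack (plus the passage before it) still fits.
    while (n + 1) * stack_ft + n * passage_ft <= side_ft:
        n += 1
    return n


n_stacks = stacks_across(STORE_SIDE_FT, STACK_WIDTH_FT, PASSAGE_FT)
stack_height_ft = STORIES * STORY_HEIGHT_FT
# Assumption: each front-to-rear stack spans the full 150 feet on every story.
total_stack_run_ft = n_stacks * STORE_SIDE_FT * STORIES

print(n_stacks)            # 30
print(stack_height_ft)     # 64
print(total_stack_run_ft)  # 36000
```

Under these assumptions the gridiron holds 30 stacks rising 64 feet, or about 36,000 feet of stack run in all. How that converts into volumes depends on shelves per stack and books per foot, which this passage does not give.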

The imagined structure allows for a vast accumulation of books:

We have now room for over 500,000 volumes in connection with each of the four reading-rooms, or 4,000,000 for the whole building when completed.

If his vision for Buffalo Public Library might be considered fairly modest from a technological point of view, when casting his net a little wider to consider a future National Library, one which “can afford any luxury”, things get a little more inventive.

[T]hey have an arrangement that brings your book from the shelf to your desk. You have only to touch the keys that correspond to the letters of the book-mark, adding the number of your desk, and the book is taken off the shelf by a pair of nippers and laid in a little car, which immediately finds its way to you. The whole thing is automatic and very ingenious…

But for Buffalo, book delivery is a cheaper, simpler, and perhaps less noisy affair.

…for my part I much prefer our pages with their smart uniforms and noiseless steps. They wear slippers, the passages are all covered with a noiseless and dustless covering, they go the length of the hall in a passage-way screened off from the desk-room so that they are seen only when they leave the stack to cross the hall towards any desk. As that is only 20 feet wide, the interruption to study is nothing.

Cutter’s fantasy might appear fairly mundane, born out of the fairly (stereo)typical neuroses of a librarian: in the prevention of all noise (through the wearing of slippers), the halting of the spread of illness (through good ventilation), and the disorder of the collection (through technological innovations)…

Far from a wild utopian dream, today Cutter’s library of the future appears basic: there will be books and there will be clean air and there will be good lighting. One wonders what Cutter might make of the library today, in which the most basic dream remains perhaps the most radical: for them to remain in our lives, free and open, clean and bright.

More at the original, in Public Domain Review: “The Library of the Future: A Vision of 1983 from 1883.” Read Cutter’s essay in its original at the Internet Archive.

Pair with “Libraries of the future are going to change in some unexpected ways,” in which IFTF Research Director (and Boing Boing co-founder) David Pescovitz describes a very different future from Cutter’s, and from which the image above was sourced.

* Alberto Manguel, The Library at Night

###

As we browse in bliss, we might recall that it was on this date in 1946 that the most famous early computer– the ENIAC (Electronic Numerical Integrator And Computer)– was dedicated. The first general-purpose computer (Turing-complete, digital, and capable of being programmed and re-programmed to solve different problems), ENIAC was begun in 1943, as part of the U.S.’s war effort (as a classified military project known as “Project PX”); it was conceived and designed by John Mauchly and J. Presper Eckert of the University of Pennsylvania, where it was built. The finished machine, composed of 17,468 electronic vacuum tubes, 7,200 crystal diodes, 1,500 relays, 70,000 resistors, 10,000 capacitors and around 5 million hand-soldered joints, weighed more than 27 tons and occupied a 30 x 50 foot room– in its time the largest single electronic apparatus in the world. ENIAC’s basic clock speed was 100,000 cycles per second. Today’s home computers have clock speeds of billions of cycles per second.

51 areas, but not Area 51…

From the folks at Focus Research, a list of “51 Things You Aren’t Allowed to See on Google Maps”:

…for all of the places that Google Maps allows you to see, there are plenty of places that are off-limits. Whether it’s due to government restrictions, personal-privacy lawsuits or mistakes, Google Maps has slapped a “Prohibited” sign on the following 51 places.

1. The White House: Google Maps’ images of the White House show a digitally erased version of the roof in order to obscure the air-defense and security assets that are in place.

2. The U.S. Capitol: The U.S. Capitol has been fuzzy ever since Google Maps launched. Current versions of Google Maps and Google Earth show it uncensored, though with old pictures.

3. Dick Cheney’s [now Joe Biden’s] House: The Vice President’s digs at Number One Observatory Circle are obscured through pixelation in Google Earth and Google Maps at the behest of the U.S. government. However, high-resolution photos and aerial surveys of the property are readily available on other Web sites.

4. Soesterberg Air Base, in the Netherlands: This Dutch air-force base and former F-15 base for the U.S. Air Force during the Cold War can’t be seen via Google Maps.

5. PAVE PAWS in Cape Cod, Mass.: PAVE PAWS is the U.S. Air Force Space Command’s radar system for missile warning and space surveillance. There are two other installations besides the one in Cape Cod.

6. Shatt-Al-Arab Hotel in Basra, Iraq: This site was possibly censored after it was reported that terrorists who attacked the British at the hotel used aerial footage displayed by Google Earth to target their attacks.

7. Leeuwarden, Netherlands: This Dutch city is one of the main operating bases of the Royal Netherlands Air Force, part of NATO’s Joint Command Centre and one of three Joint Sub-Regional Commands of Allied Forces Northern Europe. Leeuwarden is also one of two regional headquarters of Allied Command Europe, headed by the Supreme Allied Commander Europe.

8. Reims Air Base in France: This lone building on Reims Air Base in France is blurred out.

9. Novi Sad: This military base in Serbia is off-limits.

10. Kamp van Zeist: Kamp van Zeist is a former U.S. Air Force base that was temporarily declared sovereign territory of the U.K. in 2000 in order to allow the Pan Am Flight 103 bombing trial to take place.

See the other 41 here… and note that, while (understandably) there’s no Street View photography available, Area 51 is on Google Maps.

As we unfold our maps, we might recall that it was on this date that, in 1952, the first UNIVAC, the world’s first commercially-produced electronic digital computer, was delivered to a customer– the Pentagon. UNIVAC, which stood for Universal Automatic Computer, was developed by J. Presper Eckert and John Mauchly, makers of ENIAC, the first general-purpose electronic digital computer (started by the U.S. Government in 1943 and finished in 1946) for use at Los Alamos and in other defense-related settings.

(In 1951, the U.S. Census Bureau “received” the first UNIVAC, but it was operating at Remington Rand Labs; there was apprehension over disassembling and moving it… it finally did reach its home– then stayed in service long after it was made obsolete by advancing technology. Indeed, the Census Bureau used it until 1963– for twelve years.)

Eckert (center) demonstrating UNIVAC to Walter Cronkite (right)

